Gemini Embedding 2 — How Multimodal Embeddings Change RAG

A deep dive into Google

Why Multimodal Embeddings

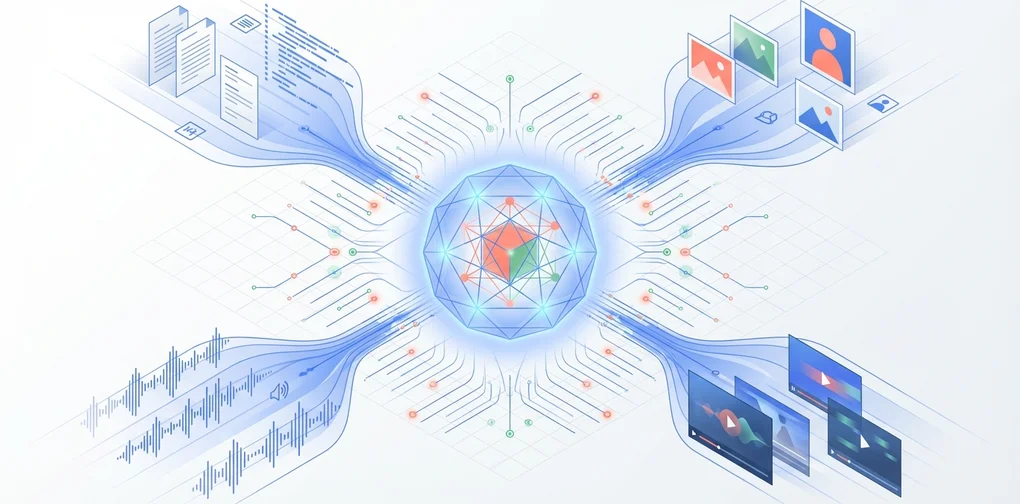

On March 10, 2026, Google announced Gemini Embedding 2 — described as “our first native multimodal embedding model.” It maps text, images, video, audio, and documents into a single vector space.

The biggest limitation of most existing RAG pipelines is that they index only text. Even when an internal wiki contains diagrams, or a product manual includes screenshots, that content is dropped at the embedding stage. As a result, searches fail to surface relevant information even though it is present in the knowledge base.

Gemini Embedding 2 addresses this problem at its root.

Gemini Embedding 2 Key Specs

Input Modalities

| Modality | Limits | Notes |
|---|---|---|
| Text | Up to 8,192 tokens | 100+ languages supported |
| Images | Up to 6 per request | PNG, JPEG |
| Video | Up to 120 seconds | MP4, MOV |
| Audio | Native processing | No intermediate text conversion needed |
| Documents | Complex docs like PDFs | Mixed text + image processing |

Output Dimensions

The default output is a 3,072-dimensional vector. The key here is Matryoshka Representation Learning (MRL). Like Russian nesting dolls, information is arranged in a nested structure: the most important information is concentrated in the leading dimensions, so a vector truncated to its first 256, 768, or 1,536 values still works as a usable embedding.

```
3072 dimensions (highest precision)
└── 1536 dimensions (high precision)
    └── 768 dimensions (general purpose)
        └── 256 dimensions (lightweight, mobile/edge)
```

The reason this matters in practice is that it allows flexible tuning of the cost-accuracy tradeoff. When indexing millions of documents, a two-stage strategy becomes feasible: first-pass filtering with 256 dimensions, then re-ranking top candidates with 3,072 dimensions.
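The two-stage idea can be sketched in a few lines of plain Python. This is a minimal illustration, not a production design: the function names (`truncate`, `two_stage_search`) are hypothetical, random vectors stand in for real embeddings, and a brute-force scan stands in for the ANN index you would use at scale.

```python
import math
import random


def normalize(vec):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)


def truncate(vec, dims):
    """MRL-style reduction: keep the leading `dims` values, then re-normalize."""
    return normalize(vec[:dims])


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def two_stage_search(query, corpus, coarse_dims=256, shortlist=10, top_k=3):
    """Return indices of the best matches: coarse filter first, full re-rank second."""
    q_coarse = truncate(query, coarse_dims)
    q_full = normalize(query)
    # Stage 1: cheap scoring on truncated vectors (brute force here; ANN in practice).
    candidates = sorted(
        range(len(corpus)),
        key=lambda i: dot(q_coarse, truncate(corpus[i], coarse_dims)),
        reverse=True,
    )[:shortlist]
    # Stage 2: exact scoring on full-length vectors, only for the shortlist.
    reranked = sorted(
        candidates,
        key=lambda i: dot(q_full, normalize(corpus[i])),
        reverse=True,
    )
    return reranked[:top_k]


# Demo data: random vectors as stand-ins for 3,072-dimension embeddings.
rng = random.Random(0)
FULL_DIMS = 3072
corpus = [[rng.gauss(0, 1) for _ in range(FULL_DIMS)] for _ in range(100)]
# The query is a slightly perturbed copy of document 17.
query = [x + 0.1 * rng.gauss(0, 1) for x in corpus[17]]

results = two_stage_search(query, corpus)
print(results[0])
```

In a real deployment the truncated document vectors would be computed once at indexing time rather than on every query, and the full vectors would be fetched only for the shortlist.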

API Access

Two gateways are available:

- Gemini API (AI Studio): For prototyping and individual developers. Includes a free tier.

- Vertex AI (Google Cloud): Enterprise scale. VPC-SC, CMEK, and IAM integration.

Comparison with Existing Embedding Models

Single-Modal vs. Multimodal

```mermaid
graph TD
    subgraph Legacy["Legacy Pipeline"]
        T1["Text Embedding<br/>(text-embedding-3)"] --> VS1["Vector Store"]
        I1["Image Embedding<br/>(CLIP)"] --> VS2["Separate Vector Store"]
        A1["Audio<br/>(Whisper → Text)"] --> T1
    end
    subgraph New["Gemini Embedding 2"]
        T2["Text"] --> GE["Unified Embedding Model"]
        I2["Image"] --> GE
        V2["Video"] --> GE
        A2["Audio"] --> GE
        GE --> VS3["Single Vector Store"]
    end
```

Three problems with the legacy approach:

- Pipeline complexity: Each modality required a separate model, separate store, and separate retrieval logic

- No cross-modal search: Queries like “find code related to this diagram” were impossible

- Intermediate conversion loss: Converting audio to text lost nuance and context

Embedding Model Spec Comparison

| Model | Modalities | Max Dimensions | MRL | Price (per 1M tokens) |
|---|---|---|---|---|
| OpenAI text-embedding-3-large | Text only | 3,072 | Yes | $0.13 |
| Cohere embed-v4 | Text + Image | 1,024 | Yes | $0.10 |
| Gemini Embedding 2 | Text + Image + Video + Audio | 3,072 | Yes | Free (preview) |
| Voyage AI voyage-3 | Text only | 1,024 | No | $0.06 |

Gemini Embedding 2’s differentiator is clear. Among the models compared here, it is the only one to natively support all four modalities, it matches the highest output dimensionality, and it is currently free during the preview period.

Practical Application: Building a Multimodal RAG Pipeline

Architecture Design

```mermaid
graph TD
    subgraph Ingestion["Data Ingestion"]
        DOC["Internal Docs<br/>(PDF, Wiki)"]
        IMG["Images<br/>(Diagrams, Screenshots)"]
        VID["Meeting Recordings<br/>(MP4)"]
        AUD["Customer Calls<br/>(Voice)"]
    end
    subgraph Embedding["Embedding Processing"]
        DOC --> GE2["Gemini Embedding 2<br/>API"]
        IMG --> GE2
        VID --> GE2
        AUD --> GE2
    end
    subgraph Storage["Vector Storage"]
        GE2 --> PG["pgvector /<br/>Pinecone /<br/>Weaviate"]
    end
    subgraph Retrieval["Retrieval & Generation"]
        Q["User Query"] --> QE["Query Embedding"]
        QE --> PG
        PG --> RR["Re-ranking"]
        RR --> LLM["Gemini Pro /<br/>Claude"]
        LLM --> ANS["Response"]
    end
```

Code Example: Python SDK

```python
import numpy as np
from google import genai
from google.genai import types

# Model ID as given in this article; confirm the current Gemini Embedding 2
# model ID in the model list before running.
MODEL_ID = "gemini-embedding-exp-03-07"
# Reduce dimensions via MRL. Text and image vectors must use the same size,
# otherwise the dot product below fails on mismatched shapes.
DIMENSIONS = 768

# Initialize client
client = genai.Client(api_key="YOUR_API_KEY")

# Text embedding
text_result = client.models.embed_content(
    model=MODEL_ID,
    contents=["Key clauses from the internal security policy document"],
    config={
        "output_dimensionality": DIMENSIONS,
        "task_type": "RETRIEVAL_DOCUMENT",
    },
)
print(f"Text vector dimensions: {len(text_result.embeddings[0].values)}")
# Output: Text vector dimensions: 768

# Image embedding (same vector space, same dimensionality)
image = types.Part.from_uri(
    file_uri="gs://my-bucket/architecture-diagram.png",
    mime_type="image/png",
)
image_result = client.models.embed_content(
    model=MODEL_ID,
    contents=[image],
    config={"output_dimensionality": DIMENSIONS},
)


# Cosine similarity between text and image vectors is now possible
def cosine_similarity(a, b):
    a, b = np.asarray(a), np.asarray(b)
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


similarity = cosine_similarity(
    text_result.embeddings[0].values,
    image_result.embeddings[0].values,
)
print(f"Text-image similarity: {similarity:.4f}")
```

Task Type Strategy

Gemini Embedding 2 lets you specify the embedding purpose using the task_type parameter:

| Task Type | Purpose | Use Case |
|---|---|---|
| RETRIEVAL_DOCUMENT | Document indexing | When storing RAG documents |
| RETRIEVAL_QUERY | Query encoding | When processing user search queries |
| SEMANTIC_SIMILARITY | Similarity comparison | Duplicate detection, clustering |
| CLASSIFICATION | Classification | Automatic document classification, labeling |
| CLUSTERING | Clustering | Topic modeling, grouping |

Pro tip: Always use different task types for indexing and retrieval. Using RETRIEVAL_DOCUMENT when storing documents and RETRIEVAL_QUERY when querying significantly improves asymmetric retrieval performance.
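One way to keep the two task types from getting mixed up is to build the request arguments in a single place. The sketch below assumes a hypothetical helper named `embed_request` (it is not part of the SDK) and reuses the model ID and the `task_type` / `output_dimensionality` config keys from the code example above. It only builds dictionaries, so it runs without an API key.

```python
from typing import Any, Sequence


def embed_request(
    contents: Sequence[Any],
    *,
    for_query: bool,
    model: str = "gemini-embedding-exp-03-07",  # model ID as used in this article
    dims: int = 768,
) -> dict[str, Any]:
    """Build keyword arguments for an embed call (hypothetical helper)."""
    # Documents and queries get different task types; dimensions must match.
    task_type = "RETRIEVAL_QUERY" if for_query else "RETRIEVAL_DOCUMENT"
    return {
        "model": model,
        "contents": list(contents),
        "config": {"task_type": task_type, "output_dimensionality": dims},
    }


doc_request = embed_request(
    ["Password rotation policy, section 4.2"], for_query=False
)
query_request = embed_request(
    ["How often must passwords be rotated?"], for_query=True
)

print(doc_request["config"]["task_type"])
print(query_request["config"]["task_type"])
```

With a real client, the dictionaries would be passed as `client.models.embed_content(**doc_request)` at indexing time and `client.models.embed_content(**query_request)` at query time.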

EM/CTO Perspective: Adoption Considerations

1. Pipeline Simplification = Reduced Operational Costs

The most direct benefit of adopting multimodal embeddings is reduced pipeline complexity.

If you currently operate separate embedding pipelines per modality:

- 3–4 models → 1 model

- 2–3 vector stores → 1 vector store

- Synchronization logic eliminated

- Fewer systems to monitor

According to Google’s official blog, some customers have reported a 70% reduction in latency.

2. Vendor Dependency Assessment

Gemini Embedding 2 is currently Google-exclusive. For organizations running a multi-cloud strategy:

- Abstract the embedding layer: Design the embedding model as a swappable interface

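- Vector format compatibility: 3,072-dimension vectors can be stored in most vector databases, but check index limits (pgvector, for example, indexes at most 2,000 dimensions on its vector type)

- Leverage MRL: Dimension reduction lets you match the vector size of other models, so the index schema can stay the same. The vector spaces still differ, so switching models requires re-embedding the corpus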
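As a rough sketch of the "swappable interface" idea, the indexing code can depend on a small protocol instead of a specific vendor SDK. Everything named here (`Embedder`, `HashEmbedder`, `Indexer`) is hypothetical; `HashEmbedder` is a deterministic stand-in for tests and carries no semantic meaning.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Protocol, Sequence


class Embedder(Protocol):
    """Minimal contract the rest of the pipeline depends on."""

    dimensions: int

    def embed_documents(self, texts: Sequence[str]) -> list[list[float]]: ...

    def embed_query(self, text: str) -> list[float]: ...


@dataclass
class HashEmbedder:
    """Test double: derives a fixed vector from a SHA-256 digest (max 32 dims)."""

    dimensions: int = 8

    def _embed(self, text: str) -> list[float]:
        digest = hashlib.sha256(text.encode("utf-8")).digest()
        return [digest[i] / 255.0 for i in range(self.dimensions)]

    def embed_documents(self, texts: Sequence[str]) -> list[list[float]]:
        return [self._embed(t) for t in texts]

    def embed_query(self, text: str) -> list[float]:
        return self._embed(text)


@dataclass
class Indexer:
    """Stores (text, vector) rows; knows nothing about the embedding vendor."""

    embedder: Embedder
    rows: list[tuple[str, list[float]]] = field(default_factory=list)

    def add(self, texts: Sequence[str]) -> None:
        vectors = self.embedder.embed_documents(texts)
        self.rows.extend(zip(texts, vectors))


indexer = Indexer(embedder=HashEmbedder())
indexer.add(["security policy", "onboarding guide"])
print(len(indexer.rows), len(indexer.rows[0][1]))
```

A Gemini-backed class would implement the same two methods by wrapping the SDK call, and replacing it later would mean re-embedding the corpus but not rewriting the indexing code.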

3. Data Governance

Sending multimodal data to an external API introduces governance considerations:

- On Vertex AI, VPC Service Controls can define data perimeters

- CMEK (Customer-Managed Encryption Keys) is supported

- PII masking is recommended before embedding meeting recordings and customer call audio

- If Data Residency requirements apply, confirm region selection carefully

4. Cost Model Forecasting

The model is currently free during preview, but billing is expected after GA. Cost optimization strategy:

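- Indexing: build the ANN index on 256-dimension vectors (MRL) → about 92% less vector storage than 3,072 dimensions (256 / 3,072 ≈ 8.3%)

- First pass: 256-dim ANN search → fast and inexpensive

- Re-ranking: exact similarity on 3,072-dim vectors → top 50 candidates only

This two-stage strategy balances cost and accuracy at the scale of millions of documents. Re-ranking requires the full vectors to remain available, for example in cheaper storage outside the index.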
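The storage side of this tradeoff is simple arithmetic. The sketch below assumes raw float32 vectors (4 bytes per value) and ignores index overhead and metadata, so real numbers will be somewhat higher; `vector_storage_gb` is a hypothetical helper.

```python
BYTES_PER_FLOAT32 = 4


def vector_storage_gb(num_vectors: int, dims: int) -> float:
    """Raw storage for float32 vectors in decimal gigabytes (no index overhead)."""
    return num_vectors * dims * BYTES_PER_FLOAT32 / 1e9


NUM_DOCS = 1_000_000
full_gb = vector_storage_gb(NUM_DOCS, 3072)   # 12.288 GB
coarse_gb = vector_storage_gb(NUM_DOCS, 256)  # 1.024 GB
reduction = 1 - coarse_gb / full_gb

print(f"3,072 dims: {full_gb:.3f} GB")
print(f"256 dims:   {coarse_gb:.3f} GB")
print(f"Reduction:  {reduction:.1%}")
```

For one million documents, the 256-dimension index needs roughly 1 GB of raw vector storage versus roughly 12 GB at full size.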

Production Migration Checklist

When transitioning from a text-only RAG to a multimodal RAG:

- Data inventory: Assess the current state of non-text data in your organization (images, video, audio)

- Prioritization: Apply multimodal indexing first to document types with the highest search failure rate

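- Vector DB compatibility: Confirm your existing vector store supports 3,072 dimensions for both storage and indexing. pgvector, Pinecone, and Weaviate can all store them, but pgvector’s HNSW and IVFFlat indexes on the vector type are limited to 2,000 dimensions, so use halfvec or an MRL-reduced size there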

- A/B testing: Quantitatively compare retrieval accuracy between existing text-only and multimodal embeddings

- Monitoring: Track cross-modal search rate, latency, and embedding API call volume

- Security review: Obtain security and compliance approval for external transmission of multimodal data
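For the A/B testing item, one common metric is recall@k over a set of queries with known relevant items. The sketch below is a minimal version under stated assumptions: the document IDs, query IDs, and result lists are made up for illustration, and `recall_at_k` / `mean_recall_at_k` are hypothetical helper names.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant items that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def mean_recall_at_k(
    runs: dict[str, list[str]], judgments: dict[str, set[str]], k: int
) -> float:
    """Average recall@k across all judged queries."""
    scores = [recall_at_k(runs.get(q, []), rel, k) for q, rel in judgments.items()]
    return sum(scores) / len(scores)


# Illustrative relevance judgments: q1 needs both a document and a diagram.
judgments = {"q1": {"doc3", "img7"}, "q2": {"doc1"}}

# Illustrative ranked results from each pipeline.
text_only = {"q1": ["doc3", "doc9", "doc2"], "q2": ["doc1", "doc4", "doc5"]}
multimodal = {"q1": ["img7", "doc3", "doc9"], "q2": ["doc4", "doc1", "doc5"]}

baseline = mean_recall_at_k(text_only, judgments, k=3)
candidate = mean_recall_at_k(multimodal, judgments, k=3)
print(f"text-only recall@3:  {baseline:.2f}")
print(f"multimodal recall@3: {candidate:.2f}")
```

The text-only run can never retrieve `img7`, which is the kind of gap the comparison is meant to surface. Judgments should come from real queries and should include non-text items.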

Conclusion

Gemini Embedding 2 is not simply “a new embedding model.” It is a paradigm shift in RAG pipeline architecture.

Search systems that could previously only handle text can now perform unified retrieval across images, video, and audio within the same vector space. This is not just a technical advancement — it is a change that can fundamentally transform how organizations leverage unstructured data.

Key action items from an Engineering Manager’s perspective:

- Now: Run a PoC using the Gemini API during the preview period (free)

- Within 1–2 weeks: Create an inventory of non-text data within your organization

- Within 1 month: Design an A/B test comparing multimodal RAG against your existing RAG pipeline
