Hindsight: Open-Source MCP Memory That Lets AI Agents Learn

An analysis of the architecture, core capabilities, and production deployment strategies of Hindsight, an MCP memory system built to address the memory problem in AI agents.

The Memory Problem with AI Agents

Any Engineering Manager who has deployed AI agents to production has likely experienced this at least once. You ask the agent, “Do you remember what we discussed yesterday?” and it just stares back blankly. Once a conversation ends, all context vanishes, and the next session starts from scratch.

There have been many attempts to solve this problem with RAG (Retrieval-Augmented Generation) or simple vector databases, but most stopped at “retrieval” without advancing to “learning.” Simply searching past conversations is fundamentally different from extracting patterns from experience and forming mental models.

Hindsight is an open-source project that tackles this problem head-on. Compatible with MCP (Model Context Protocol), it integrates immediately with major AI tools like Claude, Cursor, and VS Code. It achieved 91.4% on the LongMemEval benchmark, making it the first agent memory system to break the 90% barrier.

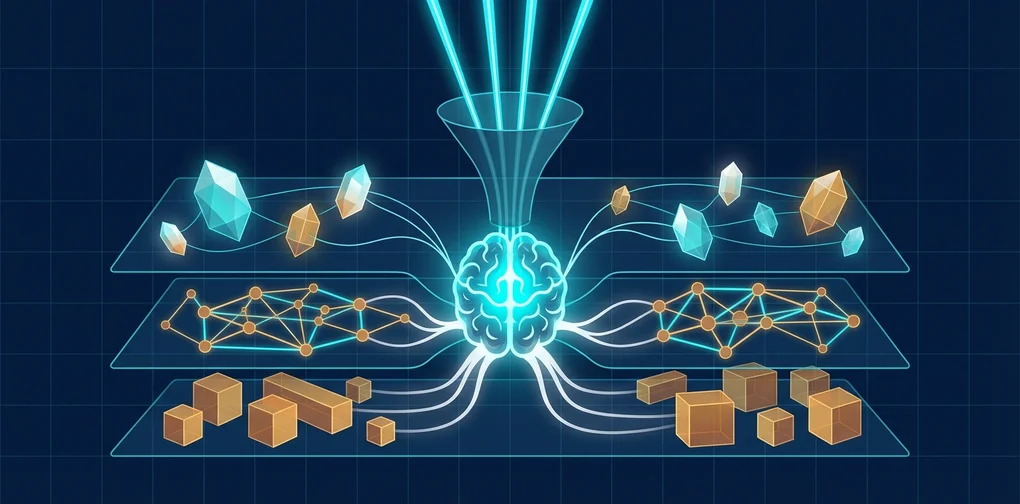

Hindsight’s Architecture

Hindsight organizes memory using biomimetic data structures inspired by human cognitive architecture.

graph TD

subgraph Memory Types

W["World<br/>Facts about the environment"] ~~~ E["Experiences<br/>Agent interactions"]

end

subgraph Processing Layers

F["Fact Extraction<br/>Extract facts"]

ER["Entity Resolution<br/>Resolve entities"]

KG["Knowledge Graph<br/>Build knowledge graph"]

end

subgraph Higher Cognition

MM["Mental Models<br/>Learned understanding"]

end

W --> F

E --> F

F --> ER

ER --> KG

KG --> MM

Memory is divided into three main layers:

- World: Facts about the environment (“The stove is hot”)

- Experiences: Records of the agent’s own interactions (“I touched the stove and it was hot”)

- Mental Models: Learned understanding formed by reflecting on raw memories

The key difference from existing RAG systems is precisely these Mental Models. Rather than simply storing and retrieving data, the system analyzes memories and forms patterns, creating a structure where agents “learn from experience.”

Three Core Operations

Retain — Storing Memories

This is not simple text storage. Retain uses an LLM to automatically extract and normalize facts, temporal information, entities, and relationships from the input content.

from hindsight_client import Hindsight

client = Hindsight(base_url="http://localhost:8888")

# Stored as structured memory, not plain text

client.retain(

bank_id="project-alpha",

content="Kim (team lead) completed the auth module refactoring in Sprint 23. "

"Migrated from session-based to JWT, improving response time by 40%.",

context="sprint-retrospective",

timestamp="2026-03-15T10:00:00Z"

)

With this single call, Hindsight internally performs the following:

- Entity extraction: “Kim (team lead)”, “Sprint 23”, “auth module”

- Relationship mapping: “Kim (team lead) -> completed -> auth module refactoring”

- Fact normalization: “session -> JWT migration”, “40% response time improvement”

- Temporal indexing: recorded as an event that occurred on 2026-03-15

- Vector embedding generation and knowledge graph update
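The steps above can be pictured as a structured record. The sketch below is a hypothetical illustration, not Hindsight's internal schema: the `Fact` and `Relation` classes, their field names, and the `parse_timestamp` helper are assumptions made for this example. The values are taken from the retain call above.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class Relation:
    """A subject-predicate-object triple (hypothetical shape)."""
    subject: str
    predicate: str
    obj: str


@dataclass
class Fact:
    """One normalized memory entry (hypothetical shape, not Hindsight's schema)."""
    text: str
    occurred_at: datetime
    entities: list[str] = field(default_factory=list)
    relations: list[Relation] = field(default_factory=list)


def parse_timestamp(value: str) -> datetime:
    """Parse an ISO 8601 timestamp, accepting a trailing 'Z' for UTC."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


fact = Fact(
    text="Auth module migrated from session-based to JWT; response time improved by 40%.",
    occurred_at=parse_timestamp("2026-03-15T10:00:00Z"),
    entities=["Kim (team lead)", "Sprint 23", "auth module"],
    relations=[Relation("Kim (team lead)", "completed", "auth module refactoring")],
)
```

Keeping the timestamp as a timezone-aware `datetime` rather than a string is what would make time-range filtering possible later. How Hindsight actually stores it is not described here.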

Recall — Retrieving Memories

Recall runs four retrieval strategies in parallel:

graph TD

Q["Query"] --> S["Semantic<br/>Vector similarity"]

Q --> K["Keyword<br/>BM25 matching"]

Q --> G["Graph<br/>Entity/causal links"]

Q --> T["Temporal<br/>Time range filter"]

S --> RRF["Reciprocal Rank<br/>Fusion"]

K --> RRF

G --> RRF

T --> RRF

RRF --> CE["Cross-Encoder<br/>Reranking"]

CE --> R["Final Results"]

result = client.recall(

bank_id="project-alpha",

query="What are the recent changes related to authentication?",

max_tokens=4096

)

The results from all four strategies are merged using Reciprocal Rank Fusion, and the final ranking is determined through Cross-Encoder Reranking.
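To make the fusion step concrete, here is a minimal sketch of Reciprocal Rank Fusion in general, not Hindsight's own code. Each strategy returns a ranked list of memory IDs, and every ID earns `1 / (k + rank)` from each list it appears in. The memory IDs are made up, and `k=60` is the constant commonly used for RRF; whether Hindsight uses the same value is an assumption.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of IDs; each list contributes 1 / (k + rank), rank starting at 1."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score, highest first; ties are broken by ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical memory IDs returned by the four strategies.
semantic = ["m1", "m2", "m3"]
keyword = ["m2", "m1", "m4"]
graph = ["m2", "m5"]
temporal = ["m3", "m2"]

fused = reciprocal_rank_fusion([semantic, keyword, graph, temporal])
print(fused)
```

Because RRF only looks at ranks, it can combine a BM25 score and a cosine similarity without having to normalize them onto a shared scale. An item that several strategies agree on, like `m2` here, rises to the top.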

Reflect — Reflection and Learning

Reflect is the core capability that elevates Hindsight from a simple memory system to a “learning system.”

insight = client.reflect(

bank_id="project-alpha",

query="Are there recurring patterns in our team's sprint retrospectives?",

)

Reflect comprehensively analyzes stored memories to:

- Discover recurring patterns

- Infer causal relationships between multiple memories

- Automatically update Mental Models

MCP Integration: Getting Started in 5 Minutes

Installation and Execution

export OPENAI_API_KEY=sk-xxx

docker run --rm -it --pull always \
  -p 8888:8888 -p 9999:9999 \
  -e HINDSIGHT_API_LLM_API_KEY=$OPENAI_API_KEY \
  -v $HOME/.hindsight-docker:/home/hindsight/.pg0 \
  ghcr.io/vectorize-io/hindsight:latest

- Port 8888: API + MCP endpoint

- Port 9999: Admin UI
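Before pointing a client at the container, it can help to confirm that both ports accept connections. The sketch below uses only the Python standard library and checks plain TCP reachability. It does not call any Hindsight endpoint, so a reachable port says nothing about whether the service behind it is healthy. The helper name `port_is_open` is made up for this example.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Port numbers come from the docker run command above.
    for name, port in [("API + MCP", 8888), ("Admin UI", 9999)]:
        status = "reachable" if port_is_open("localhost", port) else "not reachable"
        print(f"{name} (port {port}): {status}")
```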

MCP Client Configuration

{

"mcpServers": {

"hindsight": {

"type": "http",

"url": "http://localhost:8888/mcp/my-project/"

}

}

}

Supported LLM Providers

| Provider | Config Value | Notes |
|---|---|---|
| OpenAI | openai | Default |
| Anthropic | anthropic | Claude |
| Gemini | gemini | |
| Groq | groq | Fast inference |
| Ollama | ollama | Local |
| LM Studio | lmstudio | Local |

Deployment Strategy from an Engineering Manager’s Perspective

Phase 1: Start with a Personal Agent

Use it for 2 to 3 weeks and observe whether repetitive questions decrease, how quickly the agent picks up context after a switch, and how good the resulting mental models are.

Phase 2: Build Team Shared Memory

graph TD

subgraph Per-Member Agents

A["Developer A<br/>Agent"] ~~~ B["Developer B<br/>Agent"] ~~~ C["Developer C<br/>Agent"]

end

subgraph Hindsight Server

P["team-project<br/>bank"]

S["sprint-retro<br/>bank"]

D["decisions<br/>bank"]

end

A --> P

B --> P

C --> P

A --> S

B --> S

C --> S

Separate banks by purpose:

- team-project: Codebase, architecture decisions, tech stack information

- sprint-retro: Sprint retrospectives, velocity metrics, recurring issues

- decisions: ADRs, rationale behind technology choices
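With several banks, writing the MCP client configuration by hand gets repetitive. The sketch below generates one `mcpServers` entry per bank. It assumes that the path segment after `/mcp/` selects the bank, which is an inference from the example URL in the client configuration earlier and is not confirmed here. The `hindsight-<bank>` server names and the `mcp_config` helper are made up for this example.

```python
import json


def mcp_config(bank_ids, base_url="http://localhost:8888"):
    """Build an mcpServers mapping with one HTTP entry per bank.

    Assumption: the path segment after /mcp/ selects the bank.
    """
    servers = {
        f"hindsight-{bank_id}": {
            "type": "http",
            "url": f"{base_url.rstrip('/')}/mcp/{bank_id}/",
        }
        for bank_id in bank_ids
    }
    return {"mcpServers": servers}


config = mcp_config(["team-project", "sprint-retro", "decisions"])
print(json.dumps(config, indent=2))
```

Generating the file from a single list of bank names keeps every team member's client configuration consistent when a bank is added or renamed.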

Phase 3: Operational Monitoring

Practical Use Case Scenarios

Scenario 1: Accelerating Onboarding

Scenario 2: Automated Sprint Retrospective Analysis

Scenario 3: Technical Decision Tracking

Comparison with Existing Approaches

| Feature | Simple Vector DB | RAG | Knowledge Graph | Hindsight |
|---|---|---|---|---|
| Storage | Embeddings only | Document chunking + embeddings | Entities + relationships | Facts + entities + time series + vectors |
| Retrieval | Vector similarity only | Vector + keyword | Graph traversal | Quad-parallel retrieval + reranking |
| Learning | None | None | Limited | Automatic Mental Model formation |
| Time Awareness | None | Limited | Limited | Native temporal indexing |
| Benchmark | - | - | - | LongMemEval 91.4% |

Points to Consider

- Processing Latency: If you recall immediately after retain, processing may not yet be complete.

- LLM Costs: Internal processing requires separate LLM calls.

- Data Security: Memories may contain sensitive information.

- Mental Model Quality: Automatically generated mental models are not always accurate.
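One way to handle the processing latency noted above is to retry recall with a growing delay until the result looks complete. The sketch below is a generic polling helper, not part of the Hindsight client: `recall_when_ready`, its parameters, and the simulated backend are all made up for this example. Because the shape of a recall response is not described in this article, the readiness check is left to the caller.

```python
import time


def recall_when_ready(recall, is_ready, attempts=5, delay=0.5, backoff=2.0, sleep=time.sleep):
    """Call recall() until is_ready(result) is true or attempts run out.

    Returns the last result either way, so the caller decides how to proceed.
    """
    result = None
    for attempt in range(attempts):
        result = recall()
        if is_ready(result):
            return result
        if attempt < attempts - 1:
            sleep(delay)
            delay *= backoff  # wait longer before each new attempt
    return result


# Simulated backend: the memory becomes visible on the third call.
calls = []


def fake_recall():
    calls.append(1)
    return ["auth module migrated to JWT"] if len(calls) >= 3 else []


waits = []  # record the delays instead of actually sleeping
result = recall_when_ready(fake_recall, is_ready=bool, sleep=waits.append)
print(result, waits)
```

In real use, `recall` would be a small function wrapping `client.recall(...)`, and `is_ready` would inspect whatever the response actually contains.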

Conclusion

Hindsight is a project that represents meaningful progress in the field of AI agent memory. It is open source under the MIT license, and you can get started in 5 minutes with a single Docker command.
