IBM's $11B Confluent Buy — Real-Time Data Fuels AI Agents

IBM acquired Confluent for $11 billion, elevating real-time data streaming as core infrastructure for AI agents. A CTO-level analysis of what this deal means and how engineering orgs should respond.

Overview

On March 17, 2026, IBM completed its acquisition of data streaming platform company Confluent for $11 billion. With Confluent’s Apache Kafka-based platform — used by over 40% of Fortune 500 companies — now integrated into the IBM watsonx ecosystem, real-time data streaming has cemented itself as the core infrastructure for enterprise AI agents.

This acquisition goes beyond a routine M&A deal. It signals the direction in which data architecture is evolving in the AI era. The sections below analyze how engineering leaders, from Engineering Managers to CTOs, should interpret this shift.

Why Real-Time Data — The “Data Latency Gap” Problem

Limitations of Traditional AI Systems

Most enterprise AI systems run on batch processing. They collect data, refine it through ETL (Extract, Transform, Load) pipelines, and then feed it into models.

[Production DB] → [ETL Pipeline] → [Data Warehouse] → [AI Model]

Latency: hours to days

In this architecture, the data an AI model references is always a “snapshot of the past.” It cannot reflect real-time changes in market conditions, customer behavior, or system state.
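To make the latency gap concrete, the staleness of a record can be expressed as the time between when an event occurred and when a model reads it. The following is a minimal sketch; the function name `staleness_seconds` and the sample timestamps are illustrative assumptions, not part of any IBM or Confluent API.

```python
from datetime import datetime, timedelta, timezone


def staleness_seconds(event_time: datetime, read_time: datetime) -> float:
    """Return how old a record is, in seconds, at the moment it is read."""
    return (read_time - event_time).total_seconds()


# Hypothetical timestamps: one order event, read through two different paths.
event_time = datetime(2026, 3, 17, 9, 0, 0, tzinfo=timezone.utc)
batch_read = event_time + timedelta(hours=6)            # batch ETL style
stream_read = event_time + timedelta(milliseconds=50)   # streaming style

batch_staleness = staleness_seconds(event_time, batch_read)
stream_staleness = staleness_seconds(event_time, stream_read)

print(f"batch:  {batch_staleness:.0f} s")
print(f"stream: {stream_staleness:.3f} s")
```

Tracking this number per pipeline gives a simple baseline for judging whether a given workflow actually needs streaming.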

What AI Agents Demand

AI agents in 2026 are not simple chatbots that answer questions. They are autonomous systems that make judgments, take actions, and verify outcomes. If these agents base their decisions on “yesterday’s data,” the results cannot be trusted.

graph TD
    subgraph Traditional Approach
        A["Batch Data<br/>(Hours old)"] --> B["AI Model"]
        B --> C["Generate Response"]
    end
    subgraph Real-Time Approach
        D["Live Stream<br/>(Millisecond latency)"] --> E["AI Agent"]
        E --> F["Autonomous Judgment"]
        F --> G["Immediate Action"]
        G -.-> D
    end

Closing this “Data Latency Gap” is precisely why IBM acquired Confluent.

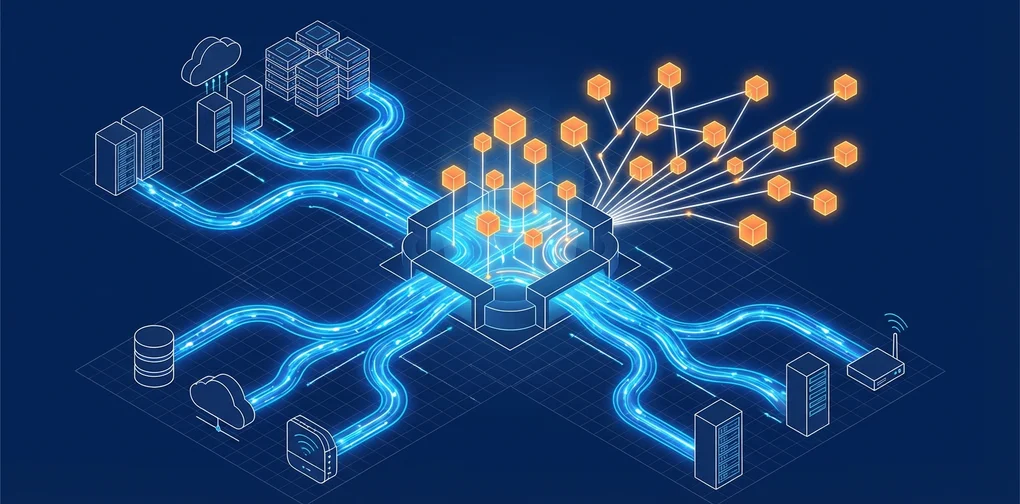

IBM + Confluent Integration Architecture

Key Integration Points

IBM SVP Rob Thomas described this acquisition as “the final piece of the Agentic AI puzzle.” Here is the concrete integration architecture:

graph TD
    subgraph Data Sources
        A["Production DB"] ~~~ B["IoT Sensors"] ~~~ C["SaaS Apps"]
    end
    subgraph Confluent
        D["Apache Kafka<br/>Event Streaming"]
        E["Tableflow<br/>Stream-to-Table Conversion"]
    end
    subgraph IBM watsonx
        F["watsonx.data<br/>Lakehouse"]
        G["watsonx.ai<br/>AI Models"]
        H["watsonx.governance<br/>Governance"]
    end
    subgraph Agent Layer
        I["AI Agent<br/>Autonomous Decision-Making"]
    end
    A --> D
    B --> D
    C --> D
    D --> E
    E --> F
    F --> G
    G --> I
    H -.-> G
    H -.-> I
    I -.-> D

Zero-Copy Data Sharing

The most noteworthy technology is the integration of Confluent’s Tableflow with watsonx.data.

Previously, using Kafka’s streaming data in AI models required a separate ETL step. With Tableflow, you can query Kafka streams directly as if they were database tables.

# Illustrative pseudocode: kafka_consumer, etl_pipeline, warehouse, ai_model,
# and watsonx_data are placeholder objects, not real client APIs.

# Before: ETL pipeline required
raw_data = kafka_consumer.poll()
transformed = etl_pipeline.transform(raw_data)
warehouse.insert(transformed)
result = ai_model.predict(warehouse.query("SELECT * FROM orders"))

# With Tableflow integration: zero-copy direct query
result = ai_model.predict(
    watsonx_data.query("SELECT * FROM kafka_stream.orders")
)

This approach eliminates ETL costs, reduces data latency to milliseconds, and enables AI agents to always act on the freshest data available.
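The idea of reading a stream as a table can be illustrated without any Kafka infrastructure. The sketch below is a conceptual model only, not Tableflow's implementation or API: it folds a keyed change stream into a table by keeping the latest value per key. The event shape (`order_id`, `status`) and the function name `materialize` are assumptions made for illustration.

```python
from typing import Iterable


def materialize(events: Iterable[dict], key: str) -> dict:
    """Fold a stream of change events into a table keyed by `key`.

    Later events for the same key overwrite earlier ones, so the result
    reflects the latest known state of each row.
    """
    table: dict = {}
    for event in events:
        table[event[key]] = event
    return table


# Hypothetical order events, in arrival order.
stream = [
    {"order_id": 1, "status": "created"},
    {"order_id": 2, "status": "created"},
    {"order_id": 1, "status": "shipped"},
]

orders = materialize(stream, key="order_id")

# The materialized view can now be filtered like rows of a table.
shipped = [row["order_id"] for row in orders.values() if row["status"] == "shipped"]
print(shipped)
```

A managed stream-to-table layer does this continuously and at scale; the point here is only that the table is a derived view of the stream rather than a separately loaded copy.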

Strategic Implications for CTOs and VPoEs

1. The Rise of the “Live Agentic AI” Paradigm

This acquisition signals the direction of the entire industry. A “Live Agentic AI” paradigm — where AI agents operate on live event streams rather than static data — is now taking hold.

Practical impact:

- Evaluate migrating existing batch-based ML pipelines to streaming architectures

- Build Kafka and event-streaming competencies within data engineering teams

- Recognize that AI agent decision quality is directly tied to data freshness
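As one way to picture the batch-to-streaming migration in the first item, the sketch below computes the same average twice: once by rescanning all stored records, and once by updating a running aggregate as each event arrives. The class name `RunningAverage` and the sample values are hypothetical.

```python
from dataclasses import dataclass


def batch_average(values: list[float]) -> float:
    """Batch style: recompute from the full dataset on every run."""
    return sum(values) / len(values)


@dataclass
class RunningAverage:
    """Streaming style: keep constant-size state and update it per event."""
    count: int = 0
    total: float = 0.0

    def update(self, value: float) -> float:
        self.count += 1
        self.total += value
        return self.total / self.count


order_values = [120.0, 80.0, 100.0]  # hypothetical order amounts

running = RunningAverage()
latest = 0.0
for value in order_values:
    latest = running.update(value)  # a fresh result after every event

print(latest, batch_average(order_values))
```

Both paths arrive at the same number; the difference is that the streaming version has an up-to-date answer after every event instead of after the next scheduled job.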

2. The Critical Role of Governance and Lineage

When real-time data directly influences AI agent decision-making, the importance of data governance rises sharply.

graph TD
    A["Live Data Stream"] --> B{"Governance Gate"}
    B -->|"Approved"| C["AI Agent"]
    B -->|"Rejected"| D["Audit Log"]
    C --> E["Autonomous Action"]
    E --> F["Lineage Tracking"]
    F -.-> D

Checkpoints:

- Build data lineage tracking systems

- Preserve the evidence behind AI agent decisions in an auditable format

- Apply Policy-Based Access Control (PBAC)
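The gate-then-record flow in the diagram above can be sketched in a few lines. This is an in-memory illustration under stated assumptions, not watsonx.governance or any real policy engine: the class `GovernanceGate`, the `no_pii` policy, and the `demo_agent` function are all hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class GovernanceGate:
    """Check each event against a policy before an agent may act on it."""
    policy: Callable[[dict], bool]
    audit_log: list = field(default_factory=list)
    lineage: list = field(default_factory=list)

    def process(self, event: dict, agent: Callable[[dict], str]) -> Optional[str]:
        if not self.policy(event):
            # Rejected events never reach the agent, but are still recorded.
            self.audit_log.append({"event": event, "outcome": "rejected"})
            return None
        action = agent(event)
        # Lineage: which input event produced which action.
        self.lineage.append({"source_event": event, "action": action})
        self.audit_log.append({"event": event, "outcome": "approved"})
        return action


def no_pii(event: dict) -> bool:
    """Hypothetical policy: only events without PII may reach the agent."""
    return not event.get("contains_pii", False)


def demo_agent(event: dict) -> str:
    """Stand-in for a real agent call."""
    return f"review:{event['id']}"


gate = GovernanceGate(policy=no_pii)
approved = gate.process({"id": "evt-1"}, demo_agent)
rejected = gate.process({"id": "evt-2", "contains_pii": True}, demo_agent)
print(approved, rejected, len(gate.audit_log))
```

In production the audit log and lineage records would go to durable storage, but the shape of the check stays the same: decide, record, then act.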

3. Vendor Lock-In vs. Open Source Strategy

IBM’s integrated platform is powerful, but it comes with vendor lock-in risk. Alternative strategies a CTO should consider:

| Approach | Pros | Cons |

|---|---|---|

| IBM Full Stack (Confluent + watsonx) | Unified management, built-in governance | High cost, vendor lock-in |

| OSS Combo (Kafka + custom AI) | Flexibility, cost savings | Integration complexity, DIY governance |

| Hybrid (Confluent Cloud + multi-AI) | Unified data layer, AI flexibility | Complex architecture management |

4. Organizational Capability Transformation

This shift is not just a technology problem. It also requires a transformation of organizational structure and capabilities.

Evolving role of data engineering teams:

- Batch ETL operations → Event streaming architecture design

- Data warehouse management → Real-time data pipeline operations

- Static reporting → Optimizing data feeds for AI agents

Expanded role of AI/ML engineers:

- Model training/deployment → Agent orchestration

- Offline evaluation → Real-time monitoring and feedback loop design

Practical Application: Getting Started

You do not need IBM-Confluent-scale infrastructure to apply the real-time data + AI agent pattern. It works at a small scale too.

Minimal Setup Example

# docker-compose.yml (minimal real-time AI agent stack)
# Note: the cp-kafka image also needs broker configuration (for example
# listener and KRaft settings), omitted here for brevity.
services:
  kafka:
    image: confluentinc/cp-kafka:latest
    ports:
      - "9092:9092"
  agent-worker:
    build: ./agent
    environment:
      - KAFKA_BOOTSTRAP_SERVERS=kafka:9092
      - LLM_API_KEY=${LLM_API_KEY}
    depends_on:
      - kafka
  monitoring:
    image: grafana/grafana:latest
    ports:
      - "3000:3000"

Event-Driven AI Agent Pattern

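import json
import os

import anthropic
from confluent_kafka import Consumer, Producer

# Broker address: defaults to localhost, overridable via the same variable
# the compose file passes to the agent-worker container.
BOOTSTRAP = os.environ.get("KAFKA_BOOTSTRAP_SERVERS", "localhost:9092")

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

consumer = Consumer({
    'bootstrap.servers': BOOTSTRAP,
    'group.id': 'ai-agent-group',
    'auto.offset.reset': 'latest'
})
producer = Producer({'bootstrap.servers': BOOTSTRAP})

consumer.subscribe(['business-events'])

while True:
    msg = consumer.poll(1.0)
    if msg is None:
        continue
    if msg.error():
        # Skip broker or partition errors instead of parsing them as events
        print(f"Consumer error: {msg.error()}")
        continue

    event = json.loads(msg.value())

    # AI agent makes judgments based on real-time events
    response = client.messages.create(
        model="claude-sonnet-4-6",
        max_tokens=1024,
        messages=[{
            "role": "user",
            "content": f"Analyze the following business event and suggest actions: {event}"
        }]
    )

    # Publish the agent's decision back as an event
    decision = response.content[0].text  # text of the first content block
    producer.produce(
        'agent-decisions',
        json.dumps({"event": event, "decision": decision})
    )
    producer.poll(0)  # serve delivery callbacks so the send queue drains

Conclusion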

IBM’s acquisition of Confluent sends a clear message: “The data infrastructure for the AI agent era must be real-time.” The $11 billion price tag signals that real-time data streaming is more than a technology trend: it is foundational infrastructure for enterprise AI.

Actions engineering leaders can take right now:

- Audit your current data architecture’s latency — Measure how “fresh” the data your AI agents reference actually is

- Run a streaming PoC — Apply a Kafka-based streaming pilot to your most time-sensitive workflows

- Design a governance framework — Establish policies and audit mechanisms before real-time data feeds into AI decision-making

- Build a team capability roadmap — Plan cross-functional skill development across data engineering and AI engineering

From batch to streaming, from chatbots to agents — the relationship between data and AI is being fundamentally redefined.
