Jira AI Agents and MCP — What Engineering Managers Need to Know

Atlassian has officially launched AI agents in Jira and adopted MCP platform-wide. Here's what engineering managers need to prepare for organizational change.

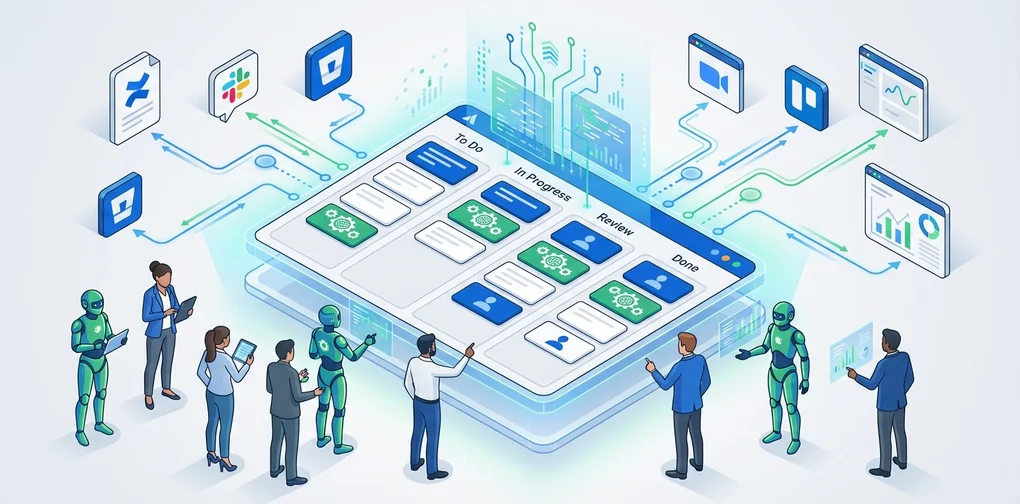

Overview

On February 25, 2026, Atlassian officially launched AI agents in Jira. This isn’t just another chatbot feature. AI agents now operate directly within the Jira workflow — they receive task assignments, collaborate via comments, and automatically execute workflow steps.

Simultaneously, Atlassian has embraced the Model Context Protocol (MCP) across its platform, enabling external AI agents like GitHub Copilot, Claude, and Gemini to connect directly with Jira alongside Rovo (Atlassian’s own AI).

From an engineering manager’s perspective, this isn’t just a feature update. It’s a signal that your team’s operating model is about to change. This article breaks down what’s shifting and how to prepare.

What’s Changed — Three Core Capabilities of Jira AI Agents

1. Assigning work to AI agents like team members

You can now assign Jira issues to AI agents using the same assignee field as humans. This means you can integrate AI without disrupting existing workflows.

# Traditional workflow

Issue created → Assign to developer → Work → Code review → Done

# AI agent integrated workflow

Issue created → Assign to AI agent (draft/research) → Developer review → Work → Done

2. Collaboration via @mention in comments

You can @mention AI agents in issue comments to get summaries, research, and solution proposals within the issue’s context. No need to switch tools — AI collaboration happens right inside Jira.
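For teams that script their Jira usage, here is a minimal sketch of what an @mention comment could look like as an API payload. It builds a comment body in Atlassian Document Format (ADF), the format Jira Cloud’s REST API v3 uses for comments, with a `mention` node followed by the request text. It assumes AI agents are mentioned through the same node type as human users, which the announcement does not confirm. The account ID and agent name are hypothetical placeholders, and nothing is sent over the network.

```python
import json


def build_mention_comment(agent_account_id: str, agent_name: str, request_text: str) -> dict:
    """Build a comment payload in Atlassian Document Format that @mentions an account.

    Assumption: an AI agent is addressed with the same ADF `mention` node
    that is used for human users.
    """
    return {
        "body": {
            "type": "doc",
            "version": 1,
            "content": [
                {
                    "type": "paragraph",
                    "content": [
                        {
                            "type": "mention",
                            "attrs": {"id": agent_account_id, "text": f"@{agent_name}"},
                        },
                        # Leading space separates the mention from the request text.
                        {"type": "text", "text": f" {request_text}"},
                    ],
                }
            ],
        }
    }


if __name__ == "__main__":
    # Hypothetical account ID and agent name, for illustration only.
    payload = build_mention_comment(
        "hypothetical-agent-account-id",
        "Triage Agent",
        "please summarize this issue and list open questions.",
    )
    print(json.dumps(payload, indent=2))
```

A payload like this would be the body of a POST to the issue’s comment endpoint. Keeping the builder separate from the HTTP call makes it easy to unit test.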

3. Automated workflow triggers

You can position AI agents at specific workflow statuses, and they automatically perform tasks when status transitions occur.
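To make the trigger idea concrete, here is a small sketch of the underlying pattern: a registry that maps status transitions to agent tasks and runs them when a transition fires. This is not Jira’s implementation or API. The class, the handler functions, and the status names are illustrative assumptions loosely based on the workflow in this article.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Transition = Tuple[str, str]  # (from_status, to_status)
Handler = Callable[[str], str]  # takes an issue key, returns a result note


@dataclass
class TriggerRegistry:
    """Maps workflow status transitions to the agent tasks that should run."""

    handlers: Dict[Transition, List[Handler]] = field(default_factory=dict)

    def on(self, from_status: str, to_status: str, handler: Handler) -> None:
        """Register a handler for one specific transition."""
        self.handlers.setdefault((from_status, to_status), []).append(handler)

    def fire(self, issue_key: str, from_status: str, to_status: str) -> List[str]:
        """Run every handler registered for this transition, in registration order."""
        return [h(issue_key) for h in self.handlers.get((from_status, to_status), [])]


# Hypothetical agent tasks; real agents would call out to an AI service.
def analyze_requirements(issue_key: str) -> str:
    return f"{issue_key}: requirements analysis drafted"


def draft_code_review(issue_key: str) -> str:
    return f"{issue_key}: code review draft prepared"


registry = TriggerRegistry()
registry.on("To Do", "In Progress", analyze_requirements)
registry.on("In Progress", "Review", draft_code_review)

if __name__ == "__main__":
    print(registry.fire("PROJ-1", "To Do", "In Progress"))
    print(registry.fire("PROJ-1", "Review", "Done"))  # no agent registered here
```

Transitions with no registered handler simply return an empty list, so human-only steps stay untouched.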

graph TD

A["Issue Created"] --> B["To Do"]

B --> C["AI Agent:<br/>Analyze Requirements"]

C --> D["In Progress<br/>(Developer)"]

D --> E["AI Agent:<br/>Draft Code Review"]

E --> F["Review<br/>(Senior Developer)"]

F --> G["Done"]

Why MCP Matters: Breaking Free from Vendor Lock-in

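Atlassian’s adoption of MCP isn’t merely a technical choice. Because MCP is an open protocol, teams can connect the AI client they already use to Jira instead of being tied to a single vendor’s assistant, which loosens vendor lock-in.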

Current MCP Integration Status

Through Atlassian’s hosted MCP servers, the following AI clients connect directly to Jira and Confluence:

| AI Client | Connection Method |

|---|---|

| Claude (Anthropic) | Native MCP |

| GitHub Copilot | MCP Integration |

| Gemini CLI (Google) | MCP Integration |

| Cursor | MCP Integration |

| Lovable | MCP Integration |

| WRITER | MCP Integration |
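For readers curious what “connecting over MCP” involves on the wire: MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with the `tools/call` method. The sketch below only builds such a message. The tool name `get_issue` and its `issue_key` argument are hypothetical and not taken from Atlassian’s server, whose actual tool names a client would discover at runtime.

```python
import json
from itertools import count

# Simple incrementing request IDs, as JSON-RPC requires an id per request.
_request_ids = count(1)


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


if __name__ == "__main__":
    # Hypothetical tool name and argument, for illustration only.
    print(mcp_tool_call("get_issue", {"issue_key": "PROJ-123"}))
```

Because every client in the table speaks this same message shape, swapping one AI client for another does not require changing the Jira side of the integration.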

The Rovo MCP Gallery

Through Atlassian’s Rovo MCP Gallery, third-party tools like GitHub, Box, and Figma can run agents within Jira. What’s notable: approximately one-third of MCP usage is for write operations. This isn’t just data retrieval — agents are actually performing work.

Enterprise Adoption Status

- 93% of MCP usage originates from paid customers

- Enterprise customers account for roughly half of all MCP operations

- Clear evidence of real-world production adoption

Five Things Engineering Managers Need to Prepare

1. Establish an AI agent governance framework

When AI agents join your team, you need clear boundaries around permissions and accountability.
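One way to make such boundaries enforceable rather than aspirational is to express them as a policy check that runs before an agent acts. The sketch below is a minimal example under stated assumptions: the `AgentPolicy` and `AgentAction` types and the three-outcome result are invented for illustration and do not correspond to any Jira or Rovo feature.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy: project scope, write access, kill switch."""

    allowed_projects: FrozenSet[str]
    can_write: bool
    halted: bool = False  # emergency stop


@dataclass(frozen=True)
class AgentAction:
    """Hypothetical description of something an agent wants to do."""

    project: str
    is_write: bool
    affects_production: bool


def evaluate(policy: AgentPolicy, action: AgentAction) -> str:
    """Return 'deny', 'needs_human_approval', or 'allow' for a proposed action."""
    if policy.halted:
        return "deny"
    if action.project not in policy.allowed_projects:
        return "deny"
    if action.is_write and not policy.can_write:
        return "deny"
    if action.affects_production:
        return "needs_human_approval"
    return "allow"


if __name__ == "__main__":
    policy = AgentPolicy(allowed_projects=frozenset({"PLAT"}), can_write=True)
    print(evaluate(policy, AgentAction("PLAT", is_write=True, affects_production=True)))
```

The checks run from most to least restrictive, so a halted agent is denied regardless of anything else it is allowed to do.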

# AI Agent Governance Checklist

permissions:

- Define which projects agents can access

- Set criteria for write permission grants

- Require human approval for production-impacting tasks

audit:

- Establish monitoring schedule for agent activity logs

- Define anomaly detection criteria

- Create monthly agent performance review process

escalation:

- Plan fallback process when agents fail

- Define escalation triggers from agent to human

- Create procedures to halt agents in emergencies

2. Redefine team roles

When AI agents take on repetitive work, your team’s responsibilities shift.

Before: Developers manually handle issue triage, draft code reviews, update documentation

After: AI generates drafts, developers focus on validation and decision-making

As an engineering manager, it’s crucial to position this transition as an opportunity, not a threat. Draw a clear line between the work agents handle and the work people handle, so your team can focus on higher-value activities.

3. Build a tool integration strategy around MCP

MCP is now a de facto standard. Though created by Anthropic, it was donated to the Linux Foundation, and is supported by OpenAI, Google, Microsoft, and AWS.

graph TD

subgraph "MCP Integration Architecture"

JiraMCP["Jira MCP Server"] ~~~ ConfMCP["Confluence MCP"]

JiraMCP ~~~ GitMCP["GitHub MCP"]

end

subgraph "AI Agent Layer"

Claude["Claude"] ~~~ Copilot["GitHub Copilot"]

Claude ~~~ Gemini["Gemini"]

end

subgraph "Workflows"

Sprint["Sprint Management"]

Review["Code Review"]

Docs["Documentation Automation"]

end

JiraMCP --> Sprint

GitMCP --> Review

ConfMCP --> Docs

4. Create a phased rollout roadmap

Don’t try to change everything at once. Introduce agents in phases.

Phase 1 (1–2 weeks): Start with read-only agents

- Automatic issue summaries, sprint report generation

- Risk: Low, Value: Immediate

Phase 2 (3–4 weeks): Limited write agent deployment

- Issue labeling, priority suggestions

- Require human approval gates

Phase 3 (2 months+): Workflow automation

- Agents triggered by status transitions

- Integration with CI/CD pipelines

- Regular impact measurement and refinement
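The phases above can also be captured as a simple capability gate, so that what an agent may do depends on which phase the team has reached. This is a sketch, not a Jira setting: the capability names are hypothetical labels for the activities listed in each phase, and extending the approval gate to every non-read-only capability is an assumption that goes slightly beyond the Phase 2 bullet.

```python
from enum import IntEnum


class Phase(IntEnum):
    READ_ONLY = 1
    LIMITED_WRITE = 2
    WORKFLOW_AUTOMATION = 3


# Hypothetical capability names, each mapped to the earliest phase that allows it.
CAPABILITY_MIN_PHASE = {
    "summarize_issue": Phase.READ_ONLY,
    "generate_sprint_report": Phase.READ_ONLY,
    "label_issue": Phase.LIMITED_WRITE,
    "suggest_priority": Phase.LIMITED_WRITE,
    "run_on_status_transition": Phase.WORKFLOW_AUTOMATION,
    "trigger_ci_cd": Phase.WORKFLOW_AUTOMATION,
}


def is_enabled(capability: str, current_phase: Phase) -> bool:
    """A capability is enabled once the rollout has reached its minimum phase."""
    required = CAPABILITY_MIN_PHASE.get(capability)
    return required is not None and current_phase >= required


def needs_approval(capability: str) -> bool:
    """Assumption: every capability beyond read-only keeps a human approval gate."""
    required = CAPABILITY_MIN_PHASE.get(capability)
    return required is not None and required >= Phase.LIMITED_WRITE


if __name__ == "__main__":
    for name in CAPABILITY_MIN_PHASE:
        print(name, is_enabled(name, Phase.LIMITED_WRITE), needs_approval(name))
```

Unknown capabilities are treated as disabled, which keeps the default safe when someone adds a new agent action without updating the rollout plan.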

5. Design measurement metrics

You need quantifiable metrics to measure the success of AI agent adoption.

| Metric | Measurement | Goal |

|---|---|---|

| Issue triage time | Time from issue creation to first response | 50% reduction |

| Repetitive work ratio | Tasks handled by AI / Total tasks | 30%+ |

| Developer satisfaction | Monthly survey (1–5 scale) | 3.5+ |

| Agent accuracy | AI suggestion adoption rate | 70%+ |

| Sprint velocity change | Points per sprint | 20% improvement |
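As a worked example of how the goals in this table could be checked, the sketch below compares a set of measurements against each target. The input field names and the sample numbers are made up for illustration. Only the thresholds (50% reduction, 30%+, 3.5+, 70%+, 20% improvement) come from the table.

```python
def percent_change(before: float, after: float) -> float:
    """Percentage change from a positive baseline (negative means a reduction)."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return 100 * (after - before) / before


def check_goals(m: dict) -> dict:
    """Return, per metric, whether the goal from the table is met."""
    return {
        "issue_triage_time": percent_change(
            m["triage_minutes_before"], m["triage_minutes_after"]
        ) <= -50,
        "repetitive_work_ratio": 100 * m["ai_handled_tasks"] / m["total_tasks"] >= 30,
        "developer_satisfaction": m["satisfaction_score"] >= 3.5,
        "agent_accuracy": 100 * m["suggestions_adopted"] / m["suggestions_made"] >= 70,
        "sprint_velocity": percent_change(
            m["velocity_before"], m["velocity_after"]
        ) >= 20,
    }


# Made-up sample measurements, for illustration only.
sample = {
    "triage_minutes_before": 60,
    "triage_minutes_after": 24,   # 60% reduction
    "ai_handled_tasks": 20,
    "total_tasks": 100,           # 20% handled by AI
    "satisfaction_score": 3.8,
    "suggestions_adopted": 45,
    "suggestions_made": 60,       # 75% adoption
    "velocity_before": 40,
    "velocity_after": 50,         # 25% improvement
}

if __name__ == "__main__":
    for metric, met in check_goals(sample).items():
        print(f"{metric}: {'met' if met else 'not met'}")
```

With these sample numbers, every goal is met except the repetitive work ratio, which sits at 20% against a 30% target.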

Real-World Scenario — How an EM’s Day Changes

Before: Traditional sprint management

09:00 - Issue triage (30 min)

09:30 - Standup prep (check team status, 15 min)

10:00 - Standup meeting

10:30 - Resolve blockers (coordinate with teams, 1 hour)

14:00 - Code review (1 hour)

15:00 - Sprint review prep (30 min)

After: With AI agents

09:00 - Review AI triage results (10 min)

09:10 - Review AI-generated standup summary (5 min)

09:30 - Standup meeting (more productive with AI summary)

10:00 - Focus on strategic blocker resolution (AI provides pre-analysis)

14:00 - Code review based on AI draft (30 min)

14:30 - Use saved time for 1:1s and technical debt cleanup

Key shift: Your role transforms from “task manager” to “decision maker.”

Important Caveats

AI agents are not omnipotent

- Agents are tools. Judgment remains a human responsibility

- Early on, agent output quality may be inconsistent. Establish validation processes

- Consider team psychological safety. Proactively address fears that “AI will replace my job”

Security and compliance

- Jira’s existing permission system is fully respected

- All agent activities are logged in audit trails

- Production changes always require human approval

- Agents operate in isolated sandbox environments per developer

Conclusion

Atlassian’s launch of Jira AI agents plus MCP adoption represents a paradigm shift in project management tooling. As MCP becomes the standard, the pace of AI agent integration across development tools will only accelerate.

As an engineering manager, your priorities are clear:

- Understand the MCP ecosystem and find the right AI agent combination for your team

- Design governance frameworks first, then implement

- Roll out gradually, paired with measurable metrics

- Position role transitions as opportunities for your team

2026 is the year AI agents move from demo to production. The change happening in Jira—a platform used by millions of teams—is the clearest signal of that shift.
