NVIDIA's NVFP4 Cuts LLM Inference Costs by 8x — While Maintaining Accuracy

How NVIDIA's NVFP4 quantization technology reduces LLM inference costs to 1/8 while preserving accuracy. We analyze the cost structure transformation from FP32 to FP4 with real-world benchmarks.

Overview

LLM inference costs have become the biggest bottleneck for enterprise AI adoption. GPU memory usage, power consumption, and hardware investment — costs scale exponentially as models grow larger. NVIDIA’s NVFP4 (4-bit floating point) quantization format has the potential to fundamentally change this equation.

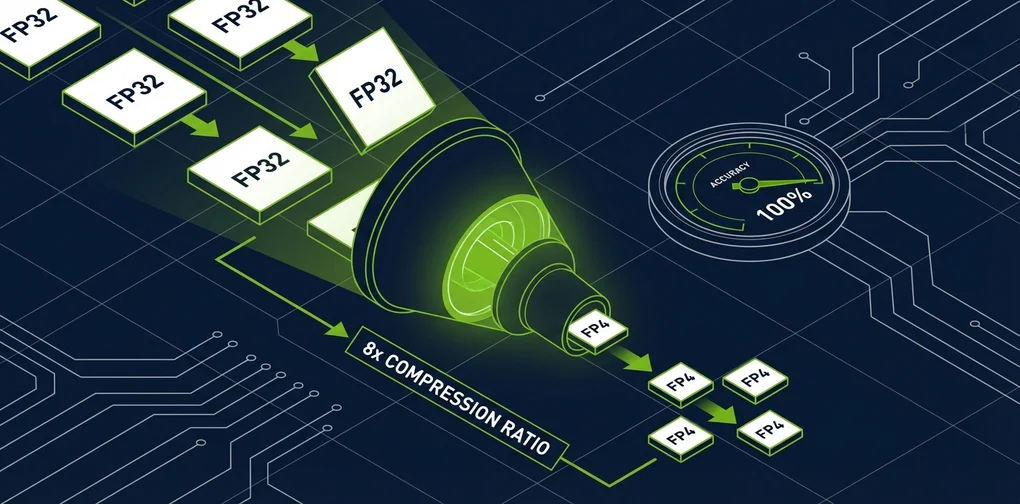

The transition from FP32 (32-bit) to FP4 (4-bit) means 8x memory reduction by simple arithmetic, and real-world benchmarks are achieving results close to this figure while minimizing accuracy loss.

This article analyzes NVFP4’s technical principles, actual performance data, and its impact on LLM operational cost structures.

What Is FP4 Quantization?

The History of Bit Reduction

The evolution of LLM quantization follows this timeline:

graph LR

A[FP32<br/>32-bit] --> B[FP16<br/>16-bit]

B --> C[INT8<br/>8-bit]

C --> D[FP8<br/>8-bit]

D --> E[FP4/NVFP4<br/>4-bit]

style E fill:#76B900,color:#fffAt each stage, the number of bits used to represent model weights decreases, reducing memory usage and computational costs. The key question is how much accuracy can be preserved.

NVFP4 Architecture

NVFP4 is a 4-bit floating-point format that NVIDIA supports at the hardware level starting from the Blackwell architecture. Unlike conventional INT4, it uses floating-point representation to maintain a wider dynamic range.

| Format | Bits | Memory vs FP32 | Dynamic Range | Hardware Support |

|---|---|---|---|---|

| FP32 | 32 | 1x | Very wide | All GPUs |

| FP16 | 16 | 2x | Wide | Most GPUs |

| FP8 | 8 | 4x | Moderate | Ada/Blackwell |

| NVFP4 | 4 | 8x | Moderate | Blackwell/Ada* |

*Ada Lovelace (RTX 4090, etc.) supported through community projects

Microscaling (MX) Format

One of NVFP4’s core innovations is Microscaling technology. This approach divides weights into small blocks and applies a separate scaling factor to each block.

Block size: 32 elements

Each block = [4-bit weights × 32] + [8-bit scale factor × 1]

Effective bits = 4 + (8/32) = 4.25 bits/elementThis method enables precise calibration of value distributions within each block even under extreme bit reduction, achieving significantly better accuracy compared to INT4.

Real-World Benchmarks: The AdaLLM Project

The AdaLLM project, which gained traction on Reddit’s r/LocalLLaMA community, published results from running NVFP4 on RTX 4090 (Ada Lovelace) GPUs.

Qwen3-8B NVFP4 Performance

| Batch Size | Total Tokens | Time (sec) | Throughput (tok/s) | VRAM (GB) |

|---|---|---|---|---|

| 1 | 128 | 3.39 | 37.79 | 7.55 |

| 4 | 512 | 3.44 | 148.87 | 7.55 |

| 8 | 1024 | 3.45 | 297.16 | 7.56 |

| 16 | 2048 | 4.36 | 469.34 | 7.56 |

Gemma3-27B NVFP4 Performance

| Batch Size | Total Tokens | Time (sec) | Throughput (tok/s) | VRAM (GB) |

|---|---|---|---|---|

| 1 | 128 | 9.40 | 13.62 | 19.83 |

| 4 | 512 | 9.53 | 53.70 | 19.84 |

Key findings:

- Qwen3-8B: 2.4x VRAM reduction vs FP16, ~20-25% throughput loss

- Gemma3-27B (27B parameters): Fits on a single RTX 4090 GPU

- Throughput loss comes from compute efficiency rather than memory, so cost efficiency improves with larger batch sizes

Cost Structure Transformation

GPU Memory Savings Impact

Here’s how FP4 quantization affects real operational costs across different scenarios.

graph TD

subgraph FP16["FP16 Operation"]

A1[70B Model] --> A2[140GB VRAM Required]

A2 --> A3[A100 80GB × 2]

A3 --> A4["Cost: ~$6/hour"]

end

subgraph FP4["NVFP4 Operation"]

B1[70B Model] --> B2[35GB VRAM Required]

B2 --> B3[A100 80GB × 1<br/>or RTX 4090]

B3 --> B4["Cost: ~$1.5/hour"]

end

style FP4 fill:#76B900,color:#fffCost Comparison Simulation

Estimated monthly operational costs for a 70B parameter model:

| Item | FP16 | NVFP4 | Reduction |

|---|---|---|---|

| GPU Count | 2× A100 | 1× A100 | 50% |

| Hourly Cost | ~$6.00 | ~$1.50 | 75% |

| Monthly Cost (24/7) | ~$4,320 | ~$1,080 | 75% |

| Power Consumption | ~600W | ~300W | 50% |

Compared to FP32, memory savings of up to 8x are achievable. Even against FP16, cost reductions approach 4x.

How Accuracy Is Preserved

MXFP4 vs Traditional INT4

The paper “Bridging the Gap Between Promise and Performance for Microscaling FP4 Quantization” (2025) provides detailed analysis of accuracy preservation mechanisms in MXFP4/NVFP4 formats.

Key techniques:

- Microscaling calibration: Independent scale factors for every 32 elements minimize value distribution distortion

- FP8 KV cache: Using FP8 for Key-Value cache preserves attention computation accuracy

- Layer-adaptive quantization: Sensitive layers maintain higher precision while less sensitive layers receive more aggressive quantization

- Calibration data-based optimization: Quantization parameters are tuned to reflect actual input data distributions

Quality Validation Results

In community benchmarks, NVFP4 models demonstrate:

- Perplexity increase: Within 1-3% of FP16

- Downstream tasks: Within 1-2% performance difference on MMLU, HellaSwag, etc.

- Coding benchmarks: Practical performance levels maintained on HumanEval

Practical Implementation Guide

Running NVFP4 Models with AdaLLM

# Installation

pip install git+https://github.com/BenChaliah/NVFP4-on-4090-vLLM.git

# Serve Qwen3-8B NVFP4 model

adallm serve nvidia/Qwen3-8B-NVFP4

# Enable FP8 GEMM path (optional)

export NVFP4_FP8=1

adallm serve nvidia/Qwen3-8B-NVFP4Supported Models

Currently supported NVFP4 models in AdaLLM:

- nvidia/Qwen3-8B-NVFP4: 8B parameters, 7.5GB VRAM on RTX 4090

- Gemma3-27B-it-NVFP4: 27B parameters, 19.8GB VRAM on RTX 4090

- Qwen3 MoE variants: Supported but optimization is still in progress

Production Deployment Considerations

graph TD

A[NVFP4 Adoption Review] --> B{Model Size?}

B -->|Under 8B| C[Single RTX 4090<br/>Cost Optimal]

B -->|8B-30B| D[RTX 4090 or<br/>Single A100]

B -->|Over 30B| E[A100/H100<br/>Multi-GPU]

C --> F{Accuracy Requirements?}

D --> F

E --> F

F -->|High| G[FP8 KV Cache + NVFP4<br/>Layer-wise Mixed Precision]

F -->|Standard| H[Pure NVFP4<br/>Maximum Cost Savings]

style G fill:#76B900,color:#fff

style H fill:#76B900,color:#fffFuture Outlook

Blackwell Architecture’s Native FP4 Support

NVIDIA’s Blackwell GPUs (B100, B200) provide native hardware-level FP4 support. Unlike the current software-based implementation on Ada Lovelace, Blackwell offers:

- Additional performance gains through dedicated FP4 tensor cores

- FP4 computation without throughput loss

- Larger models fitting on single GPUs

Industry Impact

The widespread adoption of FP4 quantization will drive several key changes:

- LLM service price drops: API-based LLM service pricing could fall to 1/4–1/8 of current levels

- Edge device deployment: 70B models running on consumer GPUs will accelerate on-premises LLM adoption

- Lower startup barriers: Initial investment costs for operating high-performance LLMs will decrease significantly

- Environmental impact: Reduced GPU power consumption will shrink AI industry’s carbon footprint

Conclusion

NVIDIA’s NVFP4 quantization technology has the potential to fundamentally transform LLM inference cost structures. Achieving 8x memory reduction versus FP32 and 4x versus FP16 while maintaining practical accuracy levels makes this not just an optimization but a paradigm shift.

The fact that community projects like AdaLLM have proven NVFP4 can run practically on RTX 4090 demonstrates that this technology delivers real value not only for data centers but also for individual developers and small teams.

As Blackwell architecture becomes widely available from 2026 onward, FP4 quantization is likely to become the new standard for LLM operations.

References

- AdaLLM: NVFP4-first inference on RTX 4090 — GitHub

- Bridging the Gap Between Promise and Performance for Microscaling FP4 Quantization — arXiv

- Reddit r/LocalLLaMA Community Discussion — Benchmarks and user feedback

- NVIDIA Blackwell Architecture — Official documentation

Was this helpful?

Your support helps me create better content. Buy me a coffee! ☕