Bayesian Teaching: How LLMs Learn Probabilistic Reasoning

Google's Bayesian Teaching research, published in Nature Communications, introduces a training methodology that enables LLMs to probabilistically update their beliefs when receiving new information. This post analyzes its implications for AI agents and enterprise systems from an engineering leadership perspective.

Modern LLMs are remarkably capable, but they share a fundamental weakness: the longer a conversation runs — or the more new information is provided — the worse they become at rationally updating their “beliefs.” A user might say, “Actually, I prefer window seats,” and the next recommendation might not reflect this at all.

A research team from Google Research and MIT addressed this directly in a paper published in Nature Communications: “Bayesian Teaching Enables Probabilistic Reasoning in Large Language Models.” The core idea is as elegant as it is powerful: instead of training LLMs to memorize correct answers, train them to mimic the probabilistic reasoning process of a mathematically optimal Bayesian model.

The Problem with LLM Probabilistic Reasoning

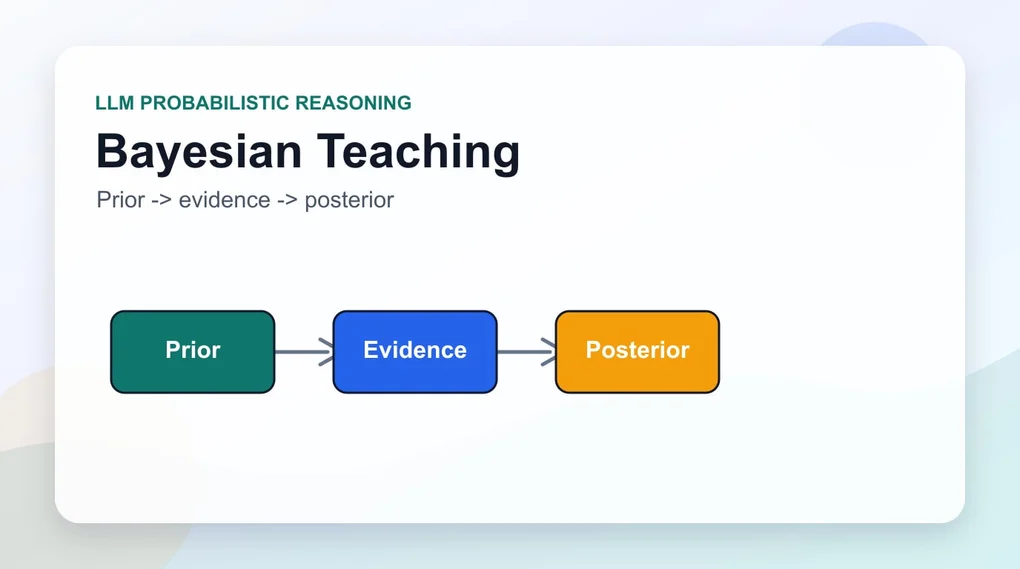

Humans reason in a Bayesian manner naturally. “It rained yesterday, so today is probably overcast too” — we continuously update prior beliefs with new evidence to arrive at posterior probabilities.
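As a minimal sketch of that update, assume a discrete set of hypotheses and made-up probabilities. The `bayes_update` helper and all the numbers below are illustrative assumptions, not taken from the paper.

```python
def bayes_update(prior, likelihood):
    """Return the posterior over hypotheses.

    prior:      dict mapping hypothesis -> P(hypothesis)
    likelihood: dict mapping hypothesis -> P(evidence | hypothesis)
    """
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: p / total for h, p in unnormalized.items()}


# Hypothetical belief about today's weather before considering yesterday.
prior = {"overcast": 0.3, "clear": 0.7}
# Hypothetical P(it rained yesterday | today's weather).
likelihood = {"overcast": 0.6, "clear": 0.2}

posterior = bayes_update(prior, likelihood)
print(posterior)
```

With these numbers the belief in "overcast" rises from 0.3 to roughly 0.56 once the evidence is taken into account, which is the kind of shift the rain example describes.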

Standard LLMs, by contrast, are notoriously poor at this kind of incremental belief updating. In the research team’s experiments, LLM performance tended to plateau after a single interaction: even after multiple rounds of user feedback, responses did not meaningfully improve beyond the initial level.

For AI agents and recommendation systems, this is a critical failure mode. An agent that can’t learn a user’s actual preferences after dozens of conversations provides diminishing value over time.

Bayesian Teaching: The Core Solution

The research team compared two training strategies:

Oracle Teaching: The model learns behavioral patterns from a “perfect” assistant that always selects the correct option. The LLM effectively memorizes what the right answer is for a given situation.

Bayesian Teaching: The model is trained to mimic the probabilistic predictions of a mathematically optimal Bayesian assistant. Rather than learning the final answer, it learns the intermediate reasoning process — “given the current evidence, what is the probability of each option?”
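To make the contrast concrete, here is a toy sketch of the training signal each teacher could produce. Everything in it is an assumption for illustration: the two-feature flights, the three candidate user types in `HYPOTHESES`, the softmax choice model, and the `SHARPNESS` value. It is not the paper's implementation.

```python
import math

# Hypothetical hypothesis space: each candidate user is a weight vector over
# two flight features (price, duration), both scaled to [0, 1].
HYPOTHESES = [
    (-1.0, 0.0),   # cares only about price
    (0.0, -1.0),   # cares only about duration
    (-0.5, -0.5),  # balances both
]
SHARPNESS = 5.0  # assumed softmax sharpness for the simulated user


def utilities(weights, options):
    return [sum(w * x for w, x in zip(weights, opt)) for opt in options]


def choice_probs(weights, options):
    """Softmax choice model: P(user picks each option | weights)."""
    utils = utilities(weights, options)
    top = max(utils)
    exps = [math.exp(SHARPNESS * (u - top)) for u in utils]
    total = sum(exps)
    return [e / total for e in exps]


def update_posterior(posterior, options, chosen):
    """Bayes' rule over HYPOTHESES after observing the user's choice."""
    unnorm = [p * choice_probs(h, options)[chosen]
              for p, h in zip(posterior, HYPOTHESES)]
    total = sum(unnorm)
    return [u / total for u in unnorm]


def bayesian_target(posterior, options):
    """Posterior-predictive probability of each option (Bayesian teacher)."""
    per_hypothesis = [choice_probs(h, options) for h in HYPOTHESES]
    return [sum(p * probs[i] for p, probs in zip(posterior, per_hypothesis))
            for i in range(len(options))]


def oracle_target(true_weights, options):
    """Index of the option the true user likes best (oracle teacher)."""
    utils = utilities(true_weights, options)
    return max(range(len(options)), key=lambda i: utils[i])


# Round 1: the user picks the cheap-but-long flight (index 0).
round1 = [(0.2, 0.9), (0.8, 0.1)]
posterior = update_posterior([1 / 3] * 3, round1, chosen=0)

# Round 2: the training signal each teacher would provide.
round2 = [(0.3, 0.6), (0.7, 0.2)]
soft_label = bayesian_target(posterior, round2)    # a distribution
hard_label = oracle_target(HYPOTHESES[0], round2)  # a single index
print(posterior, soft_label, hard_label)
```

The oracle needs the user's true weights, which no real assistant has. The Bayesian teacher only uses what has been observed so far, so its label reflects the remaining uncertainty rather than hiding it.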

The results were clear. Models trained with Bayesian Teaching consistently outperformed Oracle Teaching, achieving approximately 80% agreement with the Bayesian assistant’s predictions.
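One simple way to read an agreement figure like this is as the fraction of rounds in which the fine-tuned model picks the same option as the Bayesian assistant. The sketch below assumes that definition; the paper's exact metric may differ, and the choice lists are invented.

```python
def agreement_rate(model_choices, teacher_choices):
    """Fraction of rounds where the model and the teacher pick the same option."""
    if len(model_choices) != len(teacher_choices):
        raise ValueError("choice lists must have the same length")
    if not model_choices:
        raise ValueError("at least one round is required")
    matches = sum(m == t for m, t in zip(model_choices, teacher_choices))
    return matches / len(model_choices)


# Hypothetical option indices picked over five rounds.
model_choices = [0, 2, 1, 1, 0]
teacher_choices = [0, 2, 1, 0, 0]

rate = agreement_rate(model_choices, teacher_choices)
print(f"agreement: {rate:.0%}")
```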

Even more impressive was the generalization capability. A model trained on flight recommendation data successfully applied its Bayesian reasoning skills to hotel reservations and real-world web shopping — domains it had never encountered during training.

Why This Matters: The Transferability of Reasoning Skills

The most significant finding is the transferability of reasoning skills.

Traditional LLM training tends to focus on memorizing domain-specific knowledge. Bayesian Teaching is fundamentally different: it teaches the model to internalize reasoning principles themselves, independent of domain.

Training Domain: Flight recommendations

↓ (Bayesian Teaching)

Acquired Capability: Probabilistic belief-update principles

↓ (Zero-shot generalization)

Applied Domains: Hotel booking / Shopping / Medical diagnosis / Legal research...

This is analogous to developing strong mathematical reasoning: once you have it, it applies across physics, economics, engineering, and beyond.

Practical Implications: An Engineering Leadership Perspective

For Engineering Managers and CTOs, this research carries several concrete implications.

1. Rethinking AI Agent Architecture

Most current AI agent systems operate purely on RAG (Retrieval-Augmented Generation) or tool calls. Bayesian Teaching opens the door to agents that form increasingly accurate user models through interaction history.

Consider an enterprise HR system where an AI agent refines its understanding of a hiring manager’s candidate preferences over time, or a project management tool that learns a team’s workflow patterns and adjusts its recommendations accordingly.

2. Enabling Uncertainty Quantification

At the heart of Bayesian reasoning is the ability to express uncertainty numerically: “Option A fits your profile at 70% confidence.” Current LLMs are often poorly calibrated in this regard, and training a model to match a Bayesian assistant’s predictive probabilities is a plausible route to improving that. Verbalized Sampling research, which explores how LLMs express probability distributions through language, offers a complementary perspective on the same challenge.

In enterprise decision-support systems, the difference between “82% confident” and “51% confident” is operationally significant — it determines whether a human reviews the recommendation or relies on it automatically.
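A minimal sketch of that routing decision follows. The `route_recommendation` function, the 0.8 threshold, and the two route names are hypothetical; a real threshold would depend on how well calibrated the model's confidence actually is.

```python
def route_recommendation(confidence, auto_threshold=0.8):
    """Decide whether a recommendation is applied automatically or reviewed.

    confidence:     model-reported probability in [0, 1]
    auto_threshold: assumed cutoff above which no human review is required
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return "auto_apply" if confidence >= auto_threshold else "human_review"


print(route_recommendation(0.82))
print(route_recommendation(0.51))
```

This only works if the reported confidence is trustworthy, which is why calibration matters more than the threshold itself.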

3. A New Fine-tuning Paradigm

This suggests moving away from Oracle-style fine-tuning (supervised learning on labeled correct-answer datasets) toward pipelines that generate synthetic training data by sampling a Bayesian model’s intermediate reasoning steps.

This is also compelling from a cost perspective: instead of paying large teams to label correct answers, you can generate mathematically-grounded synthetic data from a Bayesian model — a more scalable and principled approach.
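Such a pipeline could look roughly like the sketch below: simulated users with hidden preferences interact with a Bayesian teacher, and each round is written out with the teacher's probabilities as the label. The user types, the softmax choice model, the record fields, and the JSONL output are all assumptions for illustration, not the paper's data format.

```python
import json
import math
import random

# Hypothetical user types: weights over (price, duration), both in [0, 1].
HYPOTHESES = [(-1.0, 0.0), (0.0, -1.0), (-0.5, -0.5)]
SHARPNESS = 5.0  # assumed softmax sharpness for simulated users


def choice_probs(weights, options):
    """Softmax choice model: P(user picks each option | weights)."""
    utils = [sum(w * x for w, x in zip(weights, opt)) for opt in options]
    top = max(utils)
    exps = [math.exp(SHARPNESS * (u - top)) for u in utils]
    total = sum(exps)
    return [e / total for e in exps]


def simulate_dialogue(rng, rounds=3, n_options=3):
    """Generate one synthetic dialogue labeled by a Bayesian teacher."""
    true_weights = rng.choice(HYPOTHESES)  # hidden from the teacher
    posterior = [1 / len(HYPOTHESES)] * len(HYPOTHESES)
    records = []
    for round_index in range(rounds):
        options = [(round(rng.random(), 2), round(rng.random(), 2))
                   for _ in range(n_options)]
        per_h = [choice_probs(h, options) for h in HYPOTHESES]
        # Teacher label: posterior-predictive probability of each option,
        # computed before the user's choice in this round is revealed.
        teacher_probs = [sum(p * probs[i] for p, probs in zip(posterior, per_h))
                         for i in range(n_options)]
        # The simulated user responds according to their hidden weights.
        user_probs = choice_probs(true_weights, options)
        chosen = rng.choices(range(n_options), weights=user_probs)[0]
        records.append({"round": round_index, "options": options,
                        "teacher_probs": teacher_probs, "user_choice": chosen})
        # Bayes update on the observed choice before the next round.
        unnorm = [p * probs[chosen] for p, probs in zip(posterior, per_h)]
        total = sum(unnorm)
        posterior = [u / total for u in unnorm]
    return records


rng = random.Random(0)  # fixed seed so the synthetic data is reproducible
dataset = [simulate_dialogue(rng) for _ in range(4)]
jsonl = "\n".join(json.dumps(dialogue) for dialogue in dataset)
print(jsonl.splitlines()[0])
```

No human labeling appears anywhere in the loop. The cost moves instead to specifying the hypothesis space and the choice model, which is where the real modeling effort would go.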

Limitations and Caveats

This research is not a silver bullet.

Computational Cost: Computing real-time Bayesian predictions or generating large-scale synthetic training data is computationally expensive.

Complexity of Real-World Preferences: Human preferences are far more ambiguous and multi-dimensional than the structured flight or hotel recommendation scenarios used in the experiments. Formalizing them as Bayesian models is itself a research challenge.

Need for Scale Validation: The experiments focused on specific recommendation tasks. Further validation is needed across language understanding, coding, and multi-step agentic tasks before broad conclusions can be drawn.

Looking Ahead

This research demonstrates that LLMs can evolve beyond simple pattern matchers into genuine probabilistic reasoning engines. The most promising near-term applications include:

- Long-horizon dialogue agents: Systems that continuously refine their user models over dozens or hundreds of interactions

- Medical diagnosis support: AI that accumulates symptom and test data to reason toward the most probable diagnosis

- Financial risk analysis: Systems that dynamically update portfolio risk assessments as new market data arrives

Given Gartner’s warning that over 40% of agentic AI projects will be canceled by the end of 2027, largely due to failure to operationalize agents reliably, foundational reasoning research such as MIT’s self-curriculum approach and Bayesian Teaching represents exactly the kind of improvement needed to close the gap between promising demos and production-grade systems.

Closing Thoughts

As an Engineering Manager, I know that foundational research like this typically takes 2 to 3 years to find its way into production products. But understanding the direction matters for the architectural decisions we make today.

When designing an AI agent right now, ask yourself: “Does this system update its model of the user based on their feedback?” If Bayesian Teaching shows us how to internalize this capability at training time, the wise move today is to design systems with the architectural space to incorporate it.

When LLMs that genuinely reason probabilistically arrive, AI agents will become true learning partners — not just smart autocomplete.
