DeNA LLM Study Part 3: Model Training Methodologies - From Pre-training to RLHF/DPO

Deep dive into pre-training, fine-tuning, and reinforcement learning based on DeNA LLM study materials Part 3, exploring efficient techniques like LoRA, QLoRA, and DPO.

Series: DeNA LLM Study (3/5)

- Part 1: LLM Fundamentals and 2025 AI Landscape

- Part 2: Structured Output and Multi-LLM Pipelines

- Part 3: Model Training Methodologies ← Current Article

- Part 4: RAG Architecture and Latest Trends

- Part 5: Agent Design and Multi-Agent Orchestration

Introduction

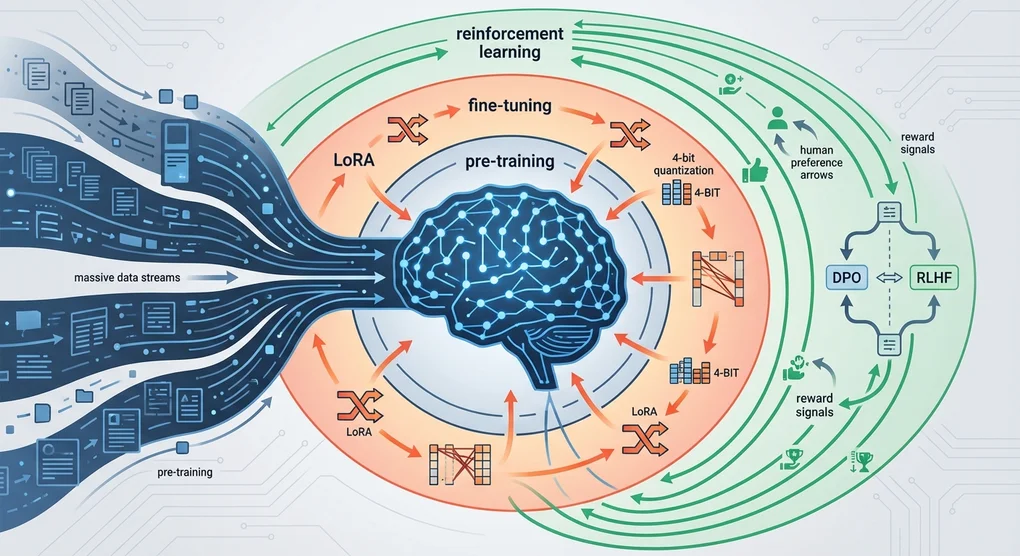

DeNA’s LLM study materials Part 3 covers diverse learning methodologies for LLMs. We’ll explore the differences between pre-training, fine-tuning, and reinforcement learning, and examine the principles and practical applications of cutting-edge efficient training techniques like LoRA, QLoRA, and DPO.

This post is based on DeNA’s study materials, enhanced with 2025 trends and hands-on experience.

Pre-training vs Fine-tuning vs Reinforcement Learning

Understanding Through Restaurant Analogy

DeNA materials explain the three learning approaches through a restaurant operation metaphor:

graph TD

A[Pre-training] --> B[Fine-tuning]

B --> C[Reinforcement Learning<br/>RLHF/DPO]

A1[Chef Basic Training<br/>Learn All Cuisines] --> A

B1[Specific Restaurant<br/>Menu Specialization] --> B

C1[Customer Feedback<br/>Taste Improvement] --> C

Pre-training

- Purpose: Acquire general language understanding capabilities

- Data: Tens to hundreds of TBs of web data

- Cost: Tens to hundreds of millions of dollars for frontier models (GPT-4 reportedly cost over $100M to train)

- Analogy: Learning all cooking techniques in culinary school

Fine-tuning

- Purpose: Specialize for specific tasks/domains

- Data: Thousands to tens of thousands of task-specific examples

- Cost: Hundreds to thousands of dollars

- Analogy: Becoming a pasta specialist at an Italian restaurant

Reinforcement Learning

- Purpose: Generate responses aligned with human preferences

- Data: Thousands to tens of thousands of preference pairs

- Cost: Thousands to tens of thousands of dollars

- Analogy: Adjusting dish flavors based on customer feedback

Practical Decision-Making Guide

graph TD

Start[Need LLM Training?] --> Q1{New Knowledge<br/>Required?}

Q1 -->|Yes| PreTrain[Pre-training<br/>Cost: Very High]

Q1 -->|No| Q2{Task-Specific<br/>Needed?}

Q2 -->|Yes| FineTune[Fine-tuning<br/>Cost: Medium]

Q2 -->|No| Q3{Preference<br/>Alignment?}

Q3 -->|Yes| RL[Reinforcement Learning<br/>Cost: Medium]

Q3 -->|No| Prompt[Prompt Engineering<br/>Cost: Low]

Decision Checklist:

- Can it be solved with prompts? → Try prompt optimization first

- Does the existing model already handle the task? → Yes: align its outputs with RL (RLHF/DPO); No: fine-tune first

- Is it a completely new domain? → Consider pre-training (but watch costs)
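The decision flowchart in this section can be read as an ordered series of checks, most expensive need first. The sketch below is only an illustration of that ordering; the function name `choose_training_method` and its boolean flags are hypothetical and not part of the DeNA materials.

```python
def choose_training_method(needs_new_knowledge: bool,
                           needs_task_specialization: bool,
                           needs_preference_alignment: bool) -> str:
    """Walk the flowchart top to bottom and return the first matching approach."""
    if needs_new_knowledge:
        return "pre-training"            # Cost: very high
    if needs_task_specialization:
        return "fine-tuning"             # Cost: medium
    if needs_preference_alignment:
        return "reinforcement learning"  # Cost: medium
    return "prompt engineering"          # Cost: low


# Example: the model knows the domain but must follow a specific output format
print(choose_training_method(False, True, False))
```

In practice the answers are rarely clean booleans, so treat this as a checklist aid rather than an automated decision.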

PEFT: The Rise of Efficient Fine-tuning

Problems with Traditional Fine-tuning

Limitations of Full Fine-tuning that updates all parameters:

- Memory Usage: Fine-tuning a 7B model requires 80GB+ VRAM

- Time Cost: Takes hours to days

- Deployment Challenges: Need to store entire model per task (tens of GBs)

Core Idea of PEFT

Parameter-Efficient Fine-Tuning (PEFT) maximizes efficiency by training only a subset of parameters:

graph TD

subgraph Traditional_Fine-tuning

A[Original Model<br/>7B Parameters] --> B[Full Update<br/>7B Parameters]

B --> C[New Model<br/>28GB Storage]

end

subgraph PEFT

D[Original Model<br/>7B Parameters] --> E[Add Few Parameters<br/>Millions]

E --> F[Store Adapter Only<br/>Under 10MB]

end

Major PEFT Methods:

- Adapter: Insert small networks between layers

- Prefix Tuning: Add trainable prefixes to inputs

- LoRA: Update via low-rank decomposition (most popular)

- Prompt Tuning: Train only soft prompts

LoRA: Principles of Low-Rank Adaptation

Mathematical Background

LoRA (Low-Rank Adaptation) is based on the following mathematical insight:

# Original weight update (Full Fine-tuning)

W_new = W_original + ΔW # ΔW is d×d size

# LoRA's low-rank decomposition

ΔW = B @ A # B is d×r, A is r×d (r << d)

# Practical application

output = (W_original + B @ A) @ input

Core Idea:

- Pre-trained weights already contain abundant information

- The weight update (ΔW) needed for fine-tuning has low intrinsic dimensionality

- Therefore, ΔW can be expressed as the product of two small matrices (B, A)
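To make the low-rank idea concrete, here is a minimal NumPy sketch. The dimensions (`d = 512`, `r = 8`) and the random matrices are arbitrary assumptions chosen for illustration; the zero initialization of `B` follows the convention used in the LoRA paper so that training starts from the unmodified model.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r = 512, 8                               # illustrative sizes, r << d

W = rng.standard_normal((d, d))             # frozen pre-trained weight
A = rng.standard_normal((r, d)) * 0.01      # trainable, small random init
B = np.zeros((d, r))                        # trainable, zero init so that B @ A = 0
x = rng.standard_normal(d)

# At initialization the adapted layer behaves exactly like the original one
y_init = (W + B @ A) @ x

# Pretend training has moved B away from zero
B_trained = rng.standard_normal((d, r)) * 0.01

# Merged form (what you deploy) vs. split form (what you train): same output
y_merged = (W + B_trained @ A) @ x
y_split = W @ x + B_trained @ (A @ x)

full_params = d * d                         # parameters in a full update
lora_params = d * r + r * d                 # parameters in B and A

print(f"full: {full_params:,}  lora: {lora_params:,}")
```

The split form never materializes the d×d update, which is why only the two small matrices need gradients and optimizer state.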

LoRA Hyperparameter Configuration Guide

# LoRA configuration example (HuggingFace PEFT)

lora_config:

r: 8 # Rank (intrinsic dimension)

lora_alpha: 16 # Scaling parameter

lora_dropout: 0.1 # Dropout rate

target_modules: # Layers to apply

- q_proj # Query projection

- v_proj # Value projection

bias: "none" # Whether to train bias

Hyperparameter Selection Guide:

| Parameter | Recommended | Description |
|---|---|---|
| r (Rank) | 4–16 | Smaller saves memory, larger increases expressiveness. 8 works for most cases |
| lora_alpha | r–2r | Scales the LoRA update by lora_alpha / r, so it acts like a learning-rate multiplier. Usually 1–2x of r |
| lora_dropout | 0.05–0.1 | Prevents overfitting. Set higher for small datasets |
| target_modules | q_proj, v_proj | Query/Value projections in Attention are a common, effective default |
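The effect of the rank on adapter size is simple arithmetic. The sketch below assumes a Llama-2-7B-like shape (32 layers, 4096×4096 query and value projections); the helper name `lora_trainable_params` is hypothetical.

```python
def lora_trainable_params(d_in: int, d_out: int, r: int,
                          n_layers: int, n_target_modules: int) -> int:
    """Count LoRA parameters: each adapted module adds A (r x d_in) and B (d_out x r)."""
    per_module = r * d_in + d_out * r
    return per_module * n_layers * n_target_modules


# Assumed shape: 32 layers, q_proj and v_proj adapted, both 4096 x 4096
for r in (4, 8, 16):
    count = lora_trainable_params(4096, 4096, r, n_layers=32, n_target_modules=2)
    print(f"r={r:>2}: {count:,} trainable parameters")
```

Doubling the rank doubles the adapter size, so moving from `r=8` to `r=16` is cheap in absolute terms but still worth validating against task performance.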

LoRA Variants

DoRA (Weight-Decomposed Low-Rank Adaptation, 2024)

# DoRA: Decompose weights into magnitude and direction

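W = m * (W_0 + B @ A) / ||W_0 + B @ A||_c

# m: trainable magnitude vector, W_0: frozen pre-trained weights, B @ A: LoRA update applied to the direction, ||·||_c: column-wise norm

- Advantage: Performance closer to Full Fine-tuning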

- Disadvantage: Slightly slower than LoRA
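A minimal NumPy sketch of the magnitude/direction split may help. It follows the formulation in the DoRA paper as I understand it (column-wise normalization, magnitude initialized to the column norms of the pre-trained weight); the sizes and the helper name `dora_weight` are assumptions for illustration.

```python
import numpy as np


def dora_weight(W0, B, A, m):
    """Recompose the weight: learned magnitude times unit-norm direction."""
    V = W0 + B @ A                                        # direction before normalization
    col_norm = np.linalg.norm(V, axis=0, keepdims=True)   # one norm per column
    return m * (V / col_norm)


rng = np.random.default_rng(0)
d_out, d_in, r = 64, 32, 4                                # illustrative sizes

W0 = rng.standard_normal((d_out, d_in))                   # frozen pre-trained weight
A = rng.standard_normal((r, d_in)) * 0.01                 # LoRA matrices
B = np.zeros((d_out, r))
m = np.linalg.norm(W0, axis=0, keepdims=True)             # magnitude init = column norms of W0

W_init = dora_weight(W0, B, A, m)                         # equals W0 at initialization
W_scaled = dora_weight(W0, B, A, 2.0 * m)                 # changing m rescales columns only
```

Because the direction is always renormalized, the LoRA matrices can only rotate each column while `m` alone controls its length.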

GaLore (Gradient Low-Rank Projection, 2024)

# Project gradient to low-rank space to save memory

gradient_lowrank = project_to_lowrank(gradient)

optimizer.step(gradient_lowrank)

- Advantage: Compresses optimizer states as well, for additional memory savings (the source material cites about 50%)

- Disadvantage: High implementation complexity
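The projection step can be sketched with an SVD. This is a simplified illustration, not the reference implementation: it uses a plain momentum buffer instead of Adam, computes the projector once rather than refreshing it periodically, and all sizes and names (`galore_projector`) are assumptions.

```python
import numpy as np


def galore_projector(grad, rank):
    """Return the top-`rank` left singular vectors of the gradient."""
    U, _, _ = np.linalg.svd(grad, full_matrices=False)
    return U[:, :rank]


rng = np.random.default_rng(0)
m, n, rank = 256, 128, 8                    # illustrative sizes

W = rng.standard_normal((m, n))             # full-rank weight (still trained in full)
grad = rng.standard_normal((m, n))          # stand-in for a real gradient

P = galore_projector(grad, rank)            # shape (m, rank)
low_rank_grad = P.T @ grad                  # shape (rank, n)

# Optimizer state lives in the small space: rank * n values instead of m * n
momentum = np.zeros_like(low_rank_grad)
momentum = 0.9 * momentum + low_rank_grad

lr = 1e-3
W_new = W - lr * (P @ momentum)             # project the update back to full size

print(f"optimizer state: {momentum.size:,} vs full gradient: {grad.size:,}")
```

Unlike LoRA, the weight itself stays full-rank here; only the gradient statistics are compressed.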

LoRA+ (2024)

# Apply different learning rates to matrices A and B

lr_A = lr # Base learning rate for A

lr_B = lr * eta # Higher learning rate for B (eta > 1)

- Advantage: Roughly 1.5–2x faster convergence

- Disadvantage: Requires hyperparameter tuning
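In the LoRA+ paper the larger learning rate is assigned to the B matrix. One way to wire this up is with optimizer parameter groups. The sketch below is pure Python and only builds the group dictionaries (in the shape PyTorch optimizers accept); it assumes parameter names contain `lora_A` / `lora_B`, as they do in HuggingFace PEFT, and the default ratio of 16 is a placeholder to tune, not a recommendation from the DeNA materials.

```python
def loraplus_param_groups(named_params, base_lr, lr_ratio=16.0):
    """Split LoRA parameters into two groups with different learning rates."""
    group_a, group_b = [], []
    for name, param in named_params:
        if "lora_B" in name:
            group_b.append(param)
        elif "lora_A" in name:
            group_a.append(param)
    return [
        {"params": group_a, "lr": base_lr},             # A keeps the base rate
        {"params": group_b, "lr": base_lr * lr_ratio},  # B learns faster
    ]


# Stand-in parameters; with a real model you would pass model.named_parameters()
fake_params = [
    ("layers.0.q_proj.lora_A.default.weight", "A0"),
    ("layers.0.q_proj.lora_B.default.weight", "B0"),
    ("layers.0.q_proj.base_layer.weight", "W0"),        # frozen, ignored
]
groups = loraplus_param_groups(fake_params, base_lr=1e-4)
print([(len(g["params"]), g["lr"]) for g in groups])
```

With a real model, the returned list could be passed directly to an optimizer constructor such as `torch.optim.AdamW(groups)`.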

QLoRA: Combining Quantization with PEFT

Innovation of 4-bit Quantization

QLoRA combines 4-bit quantization with LoRA to dramatically reduce memory usage:

graph TD

subgraph Memory_Comparison

A[Original 16bit<br/>14GB] --> B[8bit Quantization<br/>7GB]

B --> C[4bit QLoRA<br/>3.5GB]

end

subgraph Performance_Retention

D[Full Fine-tuning<br/>100%] --> E[LoRA<br/>98%]

E --> F[QLoRA<br/>97%]

end

QLoRA Core Technologies:

- 4bit NormalFloat (NF4): Quantization optimized for normal distributions

- Double Quantization: Quantize quantization constants too

- Paged Optimizers: Automatic CPU-GPU memory management
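To show where "quantization constants" come from, here is a simplified block-wise 4-bit quantizer in NumPy. Note the assumption: it uses uniform absmax levels rather than the non-uniform NF4 levels, so it illustrates the block structure, not the actual NF4 codebook. The function names are hypothetical.

```python
import numpy as np


def quantize_blockwise(weights, block_size=64, bits=4):
    """Quantize to signed integers with one absmax scale per block."""
    levels = 2 ** (bits - 1) - 1                          # 7 for signed 4-bit
    blocks = weights.reshape(-1, block_size)
    scales = np.abs(blocks).max(axis=1, keepdims=True)    # quantization constants
    scales[scales == 0] = 1.0                             # avoid division by zero
    codes = np.round(blocks / scales * levels).astype(np.int8)
    return codes, scales


def dequantize_blockwise(codes, scales, bits=4):
    """Map integer codes back to approximate float weights."""
    levels = 2 ** (bits - 1) - 1
    return codes.astype(np.float32) / levels * scales


rng = np.random.default_rng(0)
w = rng.standard_normal(4096).astype(np.float32)          # stand-in weight tensor

codes, scales = quantize_blockwise(w)
w_hat = dequantize_blockwise(codes, scales).reshape(w.shape)

print("max abs error:", float(np.abs(w - w_hat).max()))
print("constants stored:", scales.size, "for", w.size, "weights")
```

The per-block `scales` array is the overhead that double quantization targets: those constants are themselves quantized so they cost fewer bits per weight.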

QLoRA Practical Workflow

from transformers import AutoModelForCausalLM, BitsAndBytesConfig

from peft import LoraConfig, get_peft_model

# 1. 4-bit quantization configuration

bnb_config = BitsAndBytesConfig(

load_in_4bit=True,

bnb_4bit_quant_type="nf4", # NormalFloat 4bit

bnb_4bit_compute_dtype="float16", # Compute in float16

bnb_4bit_use_double_quant=True, # Double quantization

)

# 2. Load model

model = AutoModelForCausalLM.from_pretrained(

"meta-llama/Llama-2-7b-hf",

quantization_config=bnb_config,

device_map="auto" # Automatic device allocation

)

# 3. LoRA configuration

lora_config = LoraConfig(

r=8,

lora_alpha=16,

target_modules=["q_proj", "v_proj"],

lora_dropout=0.1,

bias="none",

task_type="CAUSAL_LM"

)

# 4. Create PEFT model

model = get_peft_model(model, lora_config)

# 5. Check trainable parameters

trainable_params = sum(p.numel() for p in model.parameters() if p.requires_grad)

print(f"Trainable parameters: {trainable_params:,} ({trainable_params/7e9*100:.2f}%)")

# Output: Trainable parameters: 4,194,304 (0.06%)

QLoRA Practical Tips:

- GPU Memory: Train 7B model on single RTX 3090 (24GB)

- Batch Size: Use gradient accumulation (e.g., batch_size=1, gradient_accumulation_steps=16)

- Training Time: Expect slower steps than 16-bit training because weights are dequantized on the fly (the source material estimates 1.5–2x)
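Gradient accumulation works because averaging the gradients of several micro-batches gives the same result as one large batch. The NumPy sketch below checks this on a toy linear-regression loss; the data and the helper `mse_grad` are assumptions made up for the demonstration.

```python
import numpy as np


def mse_grad(w, X, y):
    """Gradient of 0.5 * mean((X @ w - y) ** 2) with respect to w."""
    return X.T @ (X @ w - y) / len(y)


rng = np.random.default_rng(0)
X = rng.standard_normal((16, 4))            # 16 samples, 4 features
y = rng.standard_normal(16)
w = np.zeros(4)

# One step with batch_size=16
full_batch_grad = mse_grad(w, X, y)

# Sixteen micro-steps with batch_size=1, accumulating before the update
accum_steps = 16
accumulated = np.zeros_like(w)
for i in range(accum_steps):
    micro_grad = mse_grad(w, X[i:i + 1], y[i:i + 1])
    accumulated += micro_grad / accum_steps  # scale so the sum is an average

print(np.allclose(full_batch_grad, accumulated))
```

The trade-off is wall-clock time: the sixteen micro-steps run sequentially, so memory goes down while time per optimizer update goes up.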

RLHF and DPO: Learning Human Preferences

Complexity of RLHF

Reinforcement Learning from Human Feedback (RLHF) is powerful but complex:

graph TD

A[1. Train SFT Model<br/>Supervised Fine-tuning] --> B[2. Train Reward Model]

B --> C[3. Optimize Policy with PPO<br/>Proximal Policy Optimization]

D[Human Preference Data<br/>A vs B Comparison] --> B

B --> E[Reward Score Prediction]

E --> C

C --> F[Final Aligned Model]

RLHF Problems:

- 3-stage Pipeline: SFT → Reward Model → RL Optimization

- Instability: PPO is sensitive to hyperparameters

- High Cost: Reward model training + RL sampling

- Difficult Debugging: Hard to diagnose RL convergence failures
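For reference, the reward model in stage 2 is commonly trained with a pairwise (Bradley-Terry style) loss that pushes the score of the preferred response above the rejected one. The sketch below is a minimal NumPy version under that assumption; the scores are made-up numbers and `reward_model_loss` is a hypothetical helper.

```python
import numpy as np


def reward_model_loss(reward_chosen, reward_rejected):
    """Mean of -log(sigmoid(r_chosen - r_rejected)) over a batch of pairs."""
    margin = np.asarray(reward_chosen, dtype=float) - np.asarray(reward_rejected, dtype=float)
    # -log(sigmoid(z)) == log(1 + exp(-z)), computed stably with logaddexp
    return float(np.mean(np.logaddexp(0.0, -margin)))


# Made-up scalar scores from a reward model for three preference pairs
good_ranking = reward_model_loss([2.0, 1.5, 3.0], [0.5, 0.0, 1.0])
no_preference = reward_model_loss([1.0, 1.0], [1.0, 1.0])
wrong_ranking = reward_model_loss([0.0, 0.5], [2.0, 1.5])

print(good_ranking, no_preference, wrong_ranking)
```

The loss is lowest when chosen responses score clearly higher, equals log 2 when the model cannot tell the pair apart, and grows when the ranking is inverted.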

DPO: Direct Preference Optimization

Direct Preference Optimization (DPO) learns human preferences directly without a reward model:

graph TD

A[Human Preference Data<br/>Preferred vs Rejected] --> B[DPO Loss Function<br/>Classification Loss]

B --> C[Aligned Model<br/>Single-stage Training]

D[RLHF: 3 Stages] -.-> E[SFT → Reward → PPO]

F[DPO: 1 Stage] -.-> C

DPO Loss Function:

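# DPO Loss (per preference pair)

loss = -log(σ(β * ((log π_θ(y_w|x) - log π_ref(y_w|x)) - (log π_θ(y_l|x) - log π_ref(y_l|x)))))

# π_θ: Policy being trained, π_ref: Frozen reference model (usually the SFT model)

# y_w: Preferred response (chosen)

# y_l: Rejected response (rejected)

# β: Hyperparameter (typically 0.1)

# σ: Sigmoid function

DPO Advantages: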

- Simplicity: No reward model needed, single training stage

- Stability: Classification loss is more stable than PPO

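- Efficiency: Lower memory and time than PPO-based RLHF, since no reward model or online sampling is needed (the source material cites about 50%)

- Performance: Comparable to, and on some tasks better than, RLHF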
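The DPO objective from the original paper compares the policy against a frozen reference model, so each response contributes a log-ratio rather than a raw log-probability. The NumPy sketch below computes that loss from sequence-level log-probabilities; the numbers are invented and `dpo_loss` is a hypothetical helper, not the TRL implementation.

```python
import numpy as np


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Mean DPO loss over a batch of (chosen, rejected) pairs."""
    chosen_logratio = np.asarray(policy_chosen_logp) - np.asarray(ref_chosen_logp)
    rejected_logratio = np.asarray(policy_rejected_logp) - np.asarray(ref_rejected_logp)
    logits = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(z)) == log(1 + exp(-z)), computed stably with logaddexp
    return float(np.mean(np.logaddexp(0.0, -logits)))


# Policy identical to the reference: no preference learned yet
loss_start = dpo_loss([-12.0], [-15.0], [-12.0], [-15.0])

# Policy has raised the chosen response and lowered the rejected one
loss_improved = dpo_loss([-10.0], [-18.0], [-12.0], [-15.0])

print(loss_start, loss_improved)
```

At the start of training the policy equals the reference, so the loss is log 2 regardless of the raw log-probabilities; it only falls as the policy shifts probability toward chosen responses relative to the reference.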

DPO Practical Implementation

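from transformers import TrainingArguments

from trl import DPOTrainer

# DPO training configuration (older TRL API; recent TRL releases expect DPOConfig, which also carries beta)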

training_args = TrainingArguments(

output_dir="./dpo_model",

per_device_train_batch_size=4,

learning_rate=5e-5,

num_train_epochs=3,

gradient_accumulation_steps=4,

)

# Initialize DPO Trainer

dpo_trainer = DPOTrainer(

model=model,

args=training_args,

train_dataset=preference_dataset, # (prompt, chosen, rejected) format

tokenizer=tokenizer,

beta=0.1, # DPO hyperparameter

)

# Run training

dpo_trainer.train()

Preference Data Format:

preference_dataset = [

{

"prompt": "How to sort a list in Python?",

"chosen": "Use the sorted() function: sorted([3,1,2])",

"rejected": "Just use sort()"

},

# ...

]

DPO Variants

ORPO (Odds Ratio Preference Optimization, 2024)

- Performs SFT and preference learning simultaneously

- No separate SFT stage needed

- Further training time reduction

IPO (Identity Preference Optimization, 2024)

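- Replaces DPO's log-sigmoid loss with a squared-error objective on the preference margin

- Less prone to overfitting the preference data; still uses a reference model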

KTO (Kahneman-Tversky Optimization, 2024)

- Uses individual feedback (good/bad) instead of pairwise comparisons

- Drastically reduced data collection costs
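The data-format difference between pairwise methods and KTO is easy to see in code. The sketch below converts `(prompt, chosen, rejected)` pairs into unpaired examples with a good/bad label. The field names `completion` and `label` are an assumption modeled on the unpaired format used by TRL's KTO trainer; check the library documentation for the exact schema of your version.

```python
def pairs_to_unpaired(preference_pairs):
    """Turn each preference pair into two independently labeled examples."""
    examples = []
    for pair in preference_pairs:
        examples.append({"prompt": pair["prompt"],
                         "completion": pair["chosen"],
                         "label": True})    # desirable response
        examples.append({"prompt": pair["prompt"],
                         "completion": pair["rejected"],
                         "label": False})   # undesirable response
    return examples


pairs = [{
    "prompt": "How to sort a list in Python?",
    "chosen": "Use the sorted() function: sorted([3,1,2])",
    "rejected": "Just use sort()",
}]
unpaired = pairs_to_unpaired(pairs)
print(len(unpaired), [ex["label"] for ex in unpaired])
```

The practical benefit runs the other way: thumbs-up/thumbs-down logs from a product already look like the unpaired format, so no pairing step is needed at all.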

Task-Specific Training Method Selection Guide

Cost-Performance Tradeoff

graph TD

A[Analyze Task Type] --> B{General<br/>Knowledge OK?}

B -->|Yes| C[Prompt Engineering<br/>Cost: $0]

B -->|No| D{Domain-Specific<br/>Needed?}

D -->|Yes| E{Data Size}

E -->|Small| F[Few-shot ICL<br/>Cost: $0]

E -->|Medium| G[LoRA/QLoRA<br/>Cost: $10~100]

E -->|Large| H[Full Fine-tuning<br/>Cost: $1,000~10,000]

D -->|No| I{Response Quality<br/>Improvement?}

I -->|Yes| J[DPO/ORPO<br/>Cost: $100~1,000]

Practical Recommendations

1. Chatbots/Conversational Systems

Prompt → SFT (LoRA) → DPO

- Domain knowledge injection: Efficient fine-tuning with LoRA

- Dialogue quality improvement: Preference alignment with DPO

2. Document Classification/Tagging

Prompt → LoRA (Optional)

- Usually sufficient with prompts

- Add LoRA for extreme performance needs

3. Code Generation

Prompt → SFT (QLoRA) → RLHF/DPO

- Code style learning: Train on large code corpus with QLoRA

- Executability improvement: Penalize compilation errors with RLHF

4. Summarization/Translation

Prompt → DPO

- Base model often sufficient

- Style adjustment: Learn desired tone/length with DPO

Memory Requirements Comparison

| Method | 7B Model | 13B Model | 70B Model |
|---|---|---|---|
| Full Fine-tuning | 80GB | 160GB | 800GB+ |
| LoRA | 40GB | 80GB | 400GB |
| QLoRA | 24GB | 40GB | 200GB |

Consumer GPU Viability:

- RTX 4090 (24GB): Can train 7B with QLoRA, 3B with LoRA

- RTX 3090 (24GB): Can train 7B with QLoRA

- RTX 4060 Ti (16GB): Can train 3B with QLoRA
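As a sanity check on these figures, the memory taken by the weights alone is simple arithmetic: parameters times bits per parameter. The helper below is a hypothetical sketch; it deliberately ignores gradients, optimizer states, activations, and quantization constants, which is why real training needs considerably more than these numbers.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


# 7B model at 16-bit, 8-bit, and 4-bit precision
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```

The gap between the 4-bit weight footprint and a 24GB card is what leaves room for activations, LoRA gradients, and optimizer state during QLoRA training.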

Insights and Reflections

Democratization of LLM Fine-tuning

What impressed me most in the DeNA materials is that LLM fine-tuning is no longer exclusive to large corporations. With QLoRA and DPO, you can:

- Fine-tune 7B models with 24GB VRAM

- Build domain-specific models on a budget of a few hundred dollars

- Use simple DPO instead of complex RLHF

Paradigm Shift in Efficiency

Efficiency has recently become a central theme:

- LoRA: About 98% of Full Fine-tuning performance while training roughly 0.1% of the parameters

- QLoRA: Nearly the same performance with about 1/4 of the weight memory

- DPO: Comparable performance with one training stage instead of RLHF's three

This is more than engineering optimization; it follows from new mathematical insights. Low-rank hypotheses, quantization theory, and implicit reward models show how quickly academic research is moving into practice.

Lessons for Practitioners

- Start with prompts: Most problems (around 80%, by the source material's estimate) can be solved with prompts

- LoRA as default: Try LoRA first when fine-tuning is needed

- Save resources with QLoRA: Minimal performance difference, 4x memory savings

- Align with DPO: DPO is the simpler default; reach for PPO-based RLHF only when you need an explicit reward model

- Measure and improve: Focus on actual task performance over benchmark scores

2025 Outlook

Expected trends:

- Smaller yet powerful models: Rise of compact models like Phi-3, Gemma 2

- On-device fine-tuning: Era of fine-tuning on smartphones

- Automated hyperparameter tuning: AutoML for LLM Fine-tuning

- Multimodal PEFT: Simultaneous image+text fine-tuning

References

Papers

- LoRA: Low-Rank Adaptation of Large Language Models (Microsoft, 2021)

- QLoRA: Efficient Finetuning of Quantized LLMs (University of Washington, 2023)

- Direct Preference Optimization: Your Language Model is Secretly a Reward Model (Stanford, 2023)

- DoRA: Weight-Decomposed Low-Rank Adaptation (NVIDIA, 2024)

- GaLore: Memory-Efficient LLM Training by Gradient Low-Rank Projection (CMU, 2024)

Libraries

- HuggingFace PEFT - LoRA, QLoRA implementation

- HuggingFace TRL - RLHF, DPO implementation

- Unsloth - 2x faster LoRA training

Coming Next: “DeNA LLM Study Part 4: Production Deployment and Monitoring” will cover strategies for deploying fine-tuned models to actual services, monitoring methods, and cost optimization techniques.