Claude Code Review — Multi-Agent PR Reviews Jump Coverage from 16% to 54%

Complete breakdown of Anthropic's new Code Review feature for Claude Code: parallel multi-agent architecture, $15–25 per-PR cost structure, and everything Engineering Managers need to know before adopting

On March 9, 2026, Anthropic quietly published a blog post that sent ripples through the engineering world. It introduced Code Review for Claude Code, a feature that automatically deploys a team of AI agents on every pull request to catch bugs and security issues.

The numbers speak for themselves. In internal testing at Anthropic, the share of PRs receiving substantive review comments jumped from 16% to 54% with this single feature. This post breaks down how it works, what it costs, and how Engineering Managers should think about adoption.

Why Now — The Explosion of AI-Generated Code

In 2026, with AI coding tools everywhere, teams are producing code at rates that human reviewers simply cannot keep pace with. A team using Claude Code aggressively might see a single developer commit dozens of times in a day. The inevitable result: many PRs merge without meaningful review, and subtle bugs introduced by AI sail straight to production.

According to Anthropic’s data, on large PRs (1,000+ lines), Code Review found an average of 7.5 issues. Developers marked fewer than 1% of suggestions as incorrect.

How It Works — Parallel Agent Teams

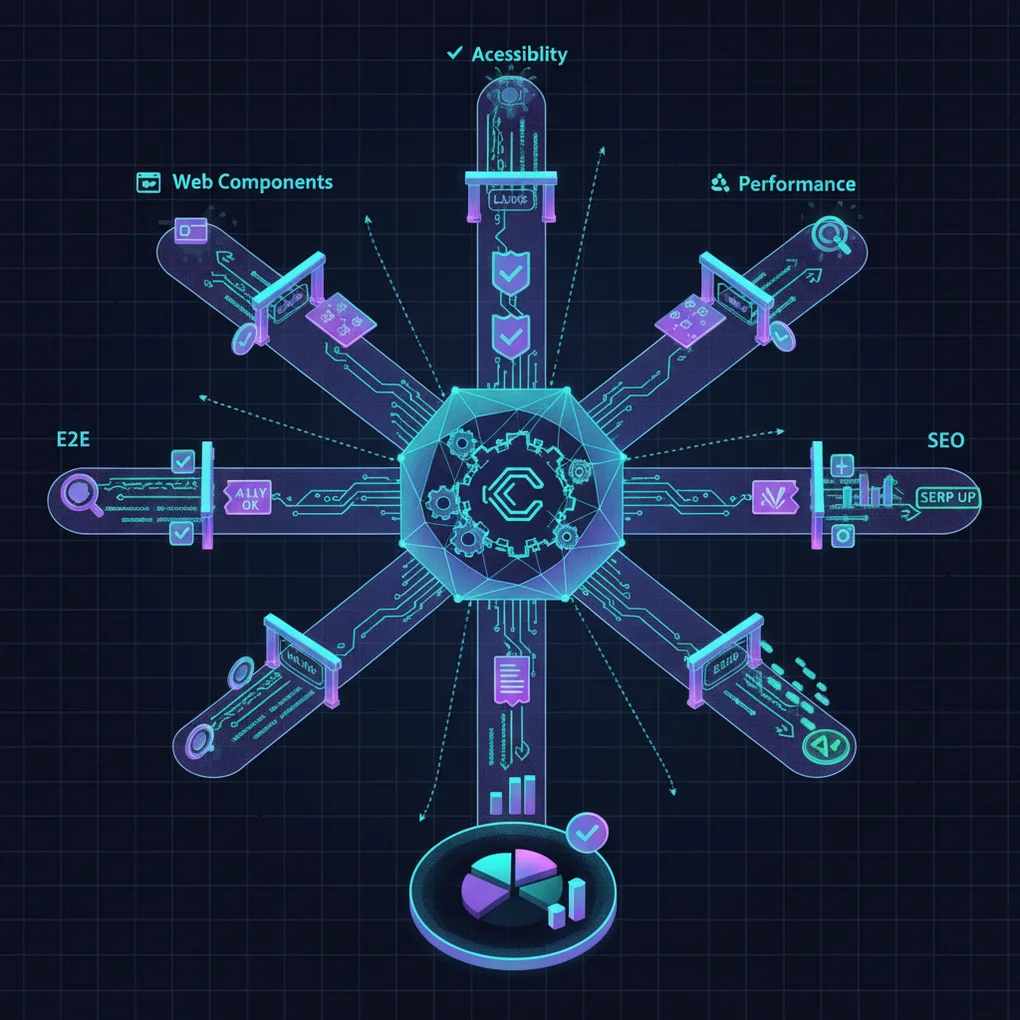

Unlike traditional AI review tools that have a single model read through the PR, Claude Code Review operates as a genuine team structure:

PR Received

│

├── Agent A: Logic error detection

├── Agent B: Security vulnerability analysis

├── Agent C: Performance regression check

└── Agent D: Test coverage review

│

└── Aggregator agent: Dedup + severity ranking

│

└── Final review comment (PR overview + inline annotations)

Agents run in parallel, and an aggregator agent consolidates results, removes duplicates, and sorts by severity. Developers see the most critical issues first. For teams looking to apply similar parallel agent execution patterns directly in their workflows, "Claude Code Agentic Workflow Patterns — 5 Types" provides concrete implementation examples.

Average time per review: ~20 minutes. This is a deliberate design choice: depth over speed.
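The fan-out and aggregation flow described in this section can be illustrated with a minimal Python sketch. It is not Anthropic's implementation: the agent names, the `Finding` fields, the canned findings, and the severity scale are all assumptions made for illustration, and a real agent would call a model instead of returning fixed data.

```python
import asyncio
from dataclasses import dataclass

# Hypothetical severity scale for this sketch; a lower rank sorts first.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    message: str
    severity: str
    agent: str


async def run_agent(name: str, diff: str) -> list[Finding]:
    """Stand-in for one specialist reviewer (the diff is unused here)."""
    await asyncio.sleep(0)  # yield control, simulating I/O-bound work
    canned = {
        "logic": [Finding("cart.py", 42, "off-by-one in loop bound", "high", name)],
        "security": [
            Finding("auth.py", 10, "token compared with ==", "critical", name),
            # Same issue as the logic agent reported, at a lower severity.
            Finding("cart.py", 42, "off-by-one in loop bound", "medium", name),
        ],
        "performance": [Finding("db.py", 7, "query inside loop", "medium", name)],
        "tests": [Finding("cart.py", 1, "no test for empty cart", "low", name)],
    }
    return canned.get(name, [])


def aggregate(batches: list[list[Finding]]) -> list[Finding]:
    """Deduplicate by location and message, keep the most severe copy, sort."""
    best: dict[tuple[str, int, str], Finding] = {}
    for finding in (f for batch in batches for f in batch):
        key = (finding.file, finding.line, finding.message)
        kept = best.get(key)
        if kept is None or SEVERITY_RANK[finding.severity] < SEVERITY_RANK[kept.severity]:
            best[key] = finding
    return sorted(
        best.values(),
        key=lambda f: (SEVERITY_RANK[f.severity], f.file, f.line),
    )


async def review(diff: str) -> list[Finding]:
    agents = ["logic", "security", "performance", "tests"]
    # gather() runs all agents concurrently and preserves their order.
    batches = await asyncio.gather(*(run_agent(a, diff) for a in agents))
    return aggregate(list(batches))


if __name__ == "__main__":
    for f in asyncio.run(review("example diff")):
        print(f"[{f.severity}] {f.file}:{f.line} {f.message} (from {f.agent})")
```

In this sketch two agents report the same off-by-one issue; the aggregator keeps only the higher-severity copy, so the final list has four entries with the critical finding first.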

Cost Structure

| Item | Details |
|---|---|
| Billing | Token-based |
| Average cost | $15–25 per PR |
| Large PRs (1,000+ lines) | Can exceed $25 |
| Small PRs (under 50 lines) | Under $5 |
| Spending controls | Monthly caps available |
| Repository-level enablement | Supported |

Critically, cost controls are robust. You can set monthly spending caps, enable Code Review per-repository, and track usage via analytics dashboards.

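If a developer’s code review time costs $50 per hour, a $20 review costs the same as 24 minutes of that developer’s time. For any PR that would take a human longer than that to review thoroughly, spending $20 to reduce the load is economically rational for plenty of teams.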
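As a rough illustration of that comparison, the following sketch converts a per-PR review cost into the equivalent minutes of reviewer time. The function name is hypothetical, and the $50 hourly rate is the article's example figure rather than a measured value.

```python
def break_even_minutes(cost_per_pr: float, hourly_rate: float) -> float:
    """Minutes of reviewer time that cost the same as one automated review."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return cost_per_pr * 60 / hourly_rate


# Figures from the text: $15-25 per PR against a $50/hour reviewer.
for cost in (15, 20, 25):
    print(f"${cost} per PR = {break_even_minutes(cost, 50):.0f} reviewer minutes")
```

Under these assumptions the published $15–25 range corresponds to 18 to 30 minutes of reviewer time per PR.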

Performance Metrics

Anthropic’s published internal data:

- Large PRs (1,000+ lines): 84% received findings, averaging 7.5 issues

- Small PRs (under 50 lines): 31% received findings, averaging 0.5 issues

- False positive rate: Developers marked suggestions as incorrect less than 1% of the time

- Review coverage: PRs with substantive comments went from 16% → 54%

A sub-1% false positive rate is remarkable. Legacy static analysis tools routinely hit false positive rates in the double digits, which leaves developers numb to alerts. If the figure holds outside Anthropic’s internal testing, the developer experience here should be significantly better.
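To get a feel for what these figures imply for a given workload, the sketch below projects them onto a hypothetical monthly PR mix. It assumes the published averages are per-PR averages across all PRs in each size class, and it covers only the two size classes with published numbers; the function and key names are made up for illustration.

```python
# Published internal figures quoted above, keyed by PR size class.
PUBLISHED = {
    "large": {"share_with_findings": 0.84, "avg_issues": 7.5},  # 1,000+ lines
    "small": {"share_with_findings": 0.31, "avg_issues": 0.5},  # under 50 lines
}


def expected_monthly(pr_counts: dict[str, int]) -> dict[str, float]:
    """Project expected PRs with findings and total issues for a PR mix."""
    prs_with_findings = 0.0
    issues = 0.0
    for size, count in pr_counts.items():
        stats = PUBLISHED[size]  # KeyError for sizes with no published data
        prs_with_findings += count * stats["share_with_findings"]
        issues += count * stats["avg_issues"]
    return {"prs_with_findings": prs_with_findings, "issues": issues}


# Hypothetical month: 10 large PRs and 100 small PRs.
projection = expected_monthly({"large": 10, "small": 100})
print(f"PRs with findings: {projection['prs_with_findings']:.1f}")
print(f"Expected issues:   {projection['issues']:.1f}")
```

For this example mix the projection is about 39.4 PRs with findings and 125 issues per month, which is only as reliable as the assumption that Anthropic's internal rates transfer to another codebase.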

What Engineering Managers Need to Know

When Does Adoption Make Sense?

High-impact scenarios:

- Teams heavily using AI coding tools: Volume is up but reviewer bandwidth hasn’t scaled

- Security-sensitive codebases: Financial, healthcare, auth-related PRs need extra validation

- Frequent large PRs (1,000+ lines): Where human reviewers are most likely to miss things

Lower-impact scenarios:

- Small teams with strong review culture where reviewers already provide thorough coverage

- Workflows dominated by small PRs (costs still accumulate, even at under $5 per PR)

Cost-Benefit Calculation

Daily PRs × Average cost × Working days = Monthly estimate

Example:

- Team size: 10 developers

- Average daily PRs: 20

- Average cost: $20/PR

- Monthly cost: 20 × $20 × 22 days = $8,800

The key question: does the cost of even one production bug, including debugging, hotfix deployment, and incident response, exceed $8,800? For most teams, it does.
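The monthly estimate in this section is simple enough to script. The sketch below reproduces the worked example and also brackets it with the published $15–25 per-PR range; the function name and the default of 22 working days are assumptions taken from the example, not part of any product.

```python
def monthly_review_cost(
    daily_prs: int, avg_cost_per_pr: float, working_days: int = 22
) -> float:
    """Daily PRs x average cost x working days = monthly estimate."""
    return daily_prs * avg_cost_per_pr * working_days


# Worked example from the text: 20 PRs/day at $20 each over 22 days.
print(monthly_review_cost(20, 20.0))  # 8800.0

# Bracket the estimate with the published $15-25 per-PR range.
low = monthly_review_cost(20, 15.0)
high = monthly_review_cost(20, 25.0)
print(f"range: ${low:,.0f} to ${high:,.0f}")  # range: $6,600 to $11,000
```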

Rollout Strategy

- Pick a pilot repository: Start with a high-complexity repo where large PRs are frequent

- Set a monthly budget cap: Start with a cap under $500 for the first 1–2 months (roughly 20 to 33 reviews per month at $15–25 each) to understand usage patterns

- Monitor false positives: Track how often developers dismiss suggestions as incorrect

- Expand: Roll out to all repositories after validating ROI
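During a pilot, the budget and false-positive steps above can be tracked with a small piece of local bookkeeping. The sketch below is hypothetical throughout: `PilotTracker` and its fields are illustrative names, the numbers are invented, and the product's own spending caps and analytics dashboards remain the source of truth.

```python
from dataclasses import dataclass


@dataclass
class PilotTracker:
    """Local bookkeeping for a pilot; not part of any Anthropic API."""

    monthly_cap_usd: float
    spent_usd: float = 0.0
    suggestions: int = 0
    marked_incorrect: int = 0

    def record_review(
        self, cost_usd: float, suggestions: int, marked_incorrect: int
    ) -> None:
        if marked_incorrect > suggestions:
            raise ValueError("marked_incorrect cannot exceed suggestions")
        self.spent_usd += cost_usd
        self.suggestions += suggestions
        self.marked_incorrect += marked_incorrect

    @property
    def remaining_budget_usd(self) -> float:
        return self.monthly_cap_usd - self.spent_usd

    @property
    def incorrect_rate(self) -> float:
        """Share of suggestions developers marked incorrect (0.0 if none yet)."""
        if self.suggestions == 0:
            return 0.0
        return self.marked_incorrect / self.suggestions


# Invented pilot data: two reviews against a $500 monthly cap.
tracker = PilotTracker(monthly_cap_usd=500.0)
tracker.record_review(cost_usd=18.0, suggestions=6, marked_incorrect=0)
tracker.record_review(cost_usd=22.5, suggestions=8, marked_incorrect=1)
print(f"remaining: ${tracker.remaining_budget_usd:.2f}")  # remaining: $459.50
print(f"marked incorrect: {tracker.incorrect_rate:.1%}")  # marked incorrect: 7.1%
```

Comparing the tracked incorrect rate against the sub-1% figure Anthropic reports gives a concrete signal for the expansion decision.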

Positioning Against Existing Tools

| Tool | Character | Difference from Claude Code Review |
|---|---|---|
| SonarQube/ESLint | Static analysis (rule-based) | Rules without contextual understanding |
| Copilot PR Summary | Summary-focused | Describes changes, doesn’t find bugs |
| GitHub Advanced Security | Security scanning | Weaker on logic errors |
| Claude Code Review | Deep multi-agent review | Complements all of the above |

Claude Code Review isn’t positioned as a replacement — it’s a complement. Keep your SonarQube, keep your security scanning, and add a semantic analysis layer on top.

Availability and Roadmap

Code Review is currently available as a Research Preview for Team and Enterprise plan users and operates through GitHub integration. GitLab support is planned for a future expansion. Teams already running a GitHub Actions + Claude Code PR auto-review pipeline can layer Code Review on top, combining their existing automation with deep semantic analysis.

As a Research Preview, both features and pricing may change before General Availability.

Closing Thoughts

AI-generated code reviewed by AI — this is the new reality of engineering in 2026. It’s not a perfect solution, but a jump from 16% to 54% review coverage is a number that’s difficult to dismiss.

Whether to adopt depends on your team’s PR patterns, code complexity, and the cost of a single production bug. Start with a pilot on one critical repository, gather data, and decide from there.
